During a discussion, a smart guy from NVIDIA pointed me to Thinkmate.com as a good source for Supermicro servers (and GRID cards). The prices shown there for the GRID cards were kind of “interesting”, so I thought a comparison of the publicly visible list prices for GRID cards from the different OEMs might be worthwhile. Prices might change in the future, and for some cards you also need a separate cable kit. All prices are from 13.02.2018. (Don’t forget: these are list prices!)

| OEM | M10 | M60 | P4 | P40 |
| --- | --- | --- | --- | --- |
| Dell | $5,050.56 | $9,821.84 | $4,020.79 | $12,305.72 |
| Supermicro | $2,299.00 | $4,399.00 | $1,899.00 | $5,699.00 |
| HP | $3,999.00 | $8,999.00 | $3,699.00 | $11,599.00 |
| Cisco | $8,750.00 | $16,873.00 | $6,999.00 | $21,000.00 |
| Lenovo | ?? | $9,649.00 | ?? | ?? |

If you know a source for the missing Lenovo List Prices – please let me know so I can add them.

Sources:

Dell:

M10: http://www.dell.com/en-us/shop/accessories/apd/490-bdig

M60: http://www.dell.com/en-us/work/shop/accessories/apd/490-bcwc

P4: http://www.dell.com/en-us/work/shop/accessories/apd/489-bbcp

P40: http://www.dell.com/en-us/work/shop/accessories/apd/489-bbco

Supermicro:

https://www.thinkmate.com/system/superserver-2029gp-tr

HP:

https://h22174.www2.hpe.com/SimplifiedConfig/Welcome

=> ProLiant DL Servers => ProLiant DL300 Servers => HPE ProLiant DL380 Gen10 Server => Configure (just choose one) => Graphics Options

When you look at GRID versioning, you might get confused by the official version number, the hypervisor driver number, and the VM driver number. Especially when you have multiple systems and cannot upgrade them all at the same time, or would like to test new versions, it’s quite helpful to know which GRID version corresponds to which hypervisor and VM driver version. Thus I created a simple table showing the corresponding versions:

| GRID Version | Hypervisor Driver | VM Driver (Windows) | VM Driver (Linux) |
| --- | --- | --- | --- |
| 4.0 | 367.43 | 369.17 | |
| 4.1 | 367.64 | 369.61 | |
| 4.2 | 367.92 | 369.95 | |
| 4.5 | 367.123 | 370.17 | |
| 4.6 | 367.124 | 370.21 | |
| 5.0 | 384.73 | 385.41 | 384.73 |
| 5.1 | 384.99 | 385.90 | 384.73 |
| 5.2 | 384.111 | 386.09 | 384.111 |
| 5.3 | 384.137 | 386.37 | 384.137 |
| 6.0 | 390.42 | 391.03 | 390.42 |
| 6.1 | 390.57 | 391.58 | 390.57 |
| 6.2 | 390.72 | 391.81 | 390.75 |
| 6.3 | 390.94 | 392.05 | 390.96 |
| 7.0 | 410.68 | 411.81 | 410.71 |
| 7.1 | 410.91 | 412.16 | 410.92 |
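If you want to check installed drivers against this table in a script, a small lookup could look like the following. This is only a sketch: the names `GridRelease`, `GRID_RELEASES` and `find_release` are made up for illustration, and the data is copied from the table above as-is.

```python
from collections import namedtuple
from typing import Optional

# One row of the version table; linux is None where the table has no entry.
GridRelease = namedtuple("GridRelease", "grid hypervisor windows linux")

GRID_RELEASES = [
    GridRelease("4.0", "367.43", "369.17", None),
    GridRelease("4.1", "367.64", "369.61", None),
    GridRelease("4.2", "367.92", "369.95", None),
    GridRelease("4.5", "367.123", "370.17", None),
    GridRelease("4.6", "367.124", "370.21", None),
    GridRelease("5.0", "384.73", "385.41", "384.73"),
    GridRelease("5.1", "384.99", "385.90", "384.73"),
    GridRelease("5.2", "384.111", "386.09", "384.111"),
    GridRelease("5.3", "384.137", "386.37", "384.137"),
    GridRelease("6.0", "390.42", "391.03", "390.42"),
    GridRelease("6.1", "390.57", "391.58", "390.57"),
    GridRelease("6.2", "390.72", "391.81", "390.75"),
    GridRelease("6.3", "390.94", "392.05", "390.96"),
    GridRelease("7.0", "410.68", "411.81", "410.71"),
    GridRelease("7.1", "410.91", "412.16", "410.92"),
]


def find_release(hypervisor_driver: str) -> Optional[GridRelease]:
    """Return the table row for a hypervisor driver version, or None if unknown."""
    for release in GRID_RELEASES:
        if release.hypervisor == hypervisor_driver:
            return release
    return None


release = find_release("390.72")
if release is not None:
    print(f"GRID {release.grid}: Windows VM driver {release.windows}")
```

Versions are kept as strings on purpose, because something like 367.123 is not a decimal number and would sort incorrectly as a float.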

For my book about NVIDIA GRID I created a data comparison table of the two graphics cards P4 and P40. From my point of view the P4 is a really underestimated card. After my presentation at NVIDIA GTC Europe this year, NVIDIA added the P4 to their comparison slides (thanks for listening). During DCUG TecCon I showed my comparison table and got some really positive feedback, so I thought it was worth publishing the comparison in a blog post:

| | P40 (3X) | P4 (6X) |
| --- | --- | --- |
| GPU | 3X Pascal | 6X Pascal |
| CUDA Cores (per Card) | 3840 | 2560 |
| CUDA Cores (Total) | 11520 | 15360 |
| Frame Buffer (Total) | 72GB | 48GB |
| H.264 1080p30 Streams (Total) | 75 | 150 |
| Max vGPU (Total) | 72 | 48 |
| Max Power (per Card) | 250W | 75W |
| Max Power (Total) | 750W | 450W |
| Price per Card (in $)* | 11,149.99 | 3,649.99 |
| Price for all Cards (in $)* | 33,449.97 | 21,899.94 |

*NVIDIA doesn’t publish list prices – so I picked list prices from one server vendor.

Current server generations allow the use of either six P4 or three P40 cards. As you can see, the price of the P4 is much lower. On the other hand, a single P40 has more CUDA cores than a single P4, so one user could get a higher peak performance on a P40 card. In total, however, six P4 cards offer more CUDA cores than three P40 cards. With the P4 cards you are limited to 48 vGPU instances, while the P40 cards allow up to 72.

There is another fact most people don’t think about. Citrix uses H.264 in its HDX 3D Pro protocol. When a user connects to a VM, one stream is created for every monitor in use. In many offices nearly every user already has two monitors, resulting in two streams per user. If you connect 72 users to the three P40 cards and every user has two monitors, 144 streams are required. However, only 75 are available. This can lead to reduced performance for the users. In contrast, the six P4 cards offer 150 streams for 48 vGPU instances.
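The stream calculation can be written down as a small sketch. It only uses the totals from the comparison table and the one-stream-per-monitor rule described above; the function and dictionary names are made up for illustration.

```python
def required_streams(users: int, monitors_per_user: int) -> int:
    """One H.264 stream is created per monitor of each connected user."""
    return users * monitors_per_user


# Totals from the comparison table (three P40 cards vs. six P4 cards).
CONFIGS = {
    "3x P40": {"max_vgpu": 72, "h264_streams": 75},
    "6x P4": {"max_vgpu": 48, "h264_streams": 150},
}


def stream_headroom(config: dict, monitors_per_user: int = 2) -> int:
    """Available streams minus required streams when every vGPU slot is in use."""
    needed = required_streams(config["max_vgpu"], monitors_per_user)
    return config["h264_streams"] - needed


for name, config in CONFIGS.items():
    print(name, stream_headroom(config))
```

A negative headroom means more streams are requested than the cards can encode at 1080p30. With two monitors per user that is the case for the fully loaded P40 setup, but not for the P4 setup.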

In addition, the maximum power of all six P4 cards is 300W lower than that of three P40 cards, resulting in lower cooling and power requirements for the data center.
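If you want to redo the comparison with other card counts or prices, the totals in the table can be derived from the per-card values. A minimal sketch, using only the per-card numbers from the table (the names `CARDS` and `totals` are illustrative):

```python
# Per-card values from the comparison table; prices are one vendor's list prices.
CARDS = {
    "P40": {"count": 3, "cuda_cores": 3840, "max_power_w": 250, "price_usd": 11149.99},
    "P4": {"count": 6, "cuda_cores": 2560, "max_power_w": 75, "price_usd": 3649.99},
}


def totals(card: dict) -> dict:
    """Multiply the per-card values by the number of cards in the server."""
    n = card["count"]
    return {
        "cuda_cores": n * card["cuda_cores"],
        "max_power_w": n * card["max_power_w"],
        "price_usd": round(n * card["price_usd"], 2),
    }


p40, p4 = totals(CARDS["P40"]), totals(CARDS["P4"])
print("Power saved with P4:", p40["max_power_w"] - p4["max_power_w"], "W")
print("Price difference:", round(p40["price_usd"] - p4["price_usd"], 2), "$")
```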

I hope you like the comparison – if you think something is wrong or missing please contact me.

During the last few days I faced an interesting error. Starting a published application from a Windows Server 2016 with an NVIDIA GRID vGPU only showed a black screen:

Interestingly, it was possible to move the black window around and even maximize it, but it stayed black. The taskbar icon, however, was shown correctly:

Other users had the problem that a published desktop started with black borders on the sides:

To fix this they needed to change the window size of the desktop.

There was no specific graphics setting configured for the server, except for this one:

Use the hardware default graphics adapter for all Remote Desktop Services sessions

The same problem is described in this Citrix Discussion. It’s also mentioned that LC7875 fixes the problem. Thus I created a Case at Citrix and requested this hotfix. The hotfix contains a changed icardd.dll (C:\Windows\System32). After installing the fix the problem was gone.

As you might have noticed, I only wrote a few blog posts during the last month. One of the reasons was that I was (secretly) working on a book about virtual desktops using a graphics card. Those who joined Thomas Remmlinger’s and my session at NVIDIA GTC Europe today already know that this book is now finished and available on Amazon. Only one thing (sorry for that): currently it’s only available in German. Hope you like it. If not, please tell me.

If you plan to deploy a Server 2016, e.g. as an RDSH or XenApp VDA, you might want to remove the pinned Server Manager from the Start Menu for new users. I don’t know why, but Microsoft does not offer a GPO setting for this. This blog post from Clint Boessen describes how to remove the pinned Server Manager icon from Server 2012. For Server 2016 the shortcut that needs to be removed is in a different path:

C:\ProgramData\Microsoft\Windows\Start Menu\Programs

After deleting the shortcut new users don’t get a pinned Server Manager in their Start Menu. It is not necessary to remove the shortcut from the following path:

C:\ProgramData\Microsoft\Windows\Start Menu\Programs\Windows Administrative Tools
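If you want to script the deletion, e.g. as part of an image build, a minimal sketch could look like this. The shortcut file name `Server Manager.lnk` is an assumption (the file name is not stated above), so check the folder before relying on it. The function name is made up as well.

```python
from pathlib import Path

# Folder from the post; only the shortcut directly in this folder is removed.
START_MENU_PROGRAMS = Path(r"C:\ProgramData\Microsoft\Windows\Start Menu\Programs")
# Assumption: the shortcut is called "Server Manager.lnk".
SHORTCUT_NAME = "Server Manager.lnk"


def remove_pinned_shortcut(programs_dir: Path = START_MENU_PROGRAMS,
                           name: str = SHORTCUT_NAME) -> bool:
    """Delete the shortcut in programs_dir itself; subfolders are left alone.

    Returns True if a file was removed, False if nothing was found.
    """
    shortcut = programs_dir / name
    if shortcut.is_file():
        shortcut.unlink()
        return True
    return False
```

The copy in the Windows Administrative Tools subfolder is deliberately not touched, matching the note above. Run it elevated, because the folder is only writable for administrators.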

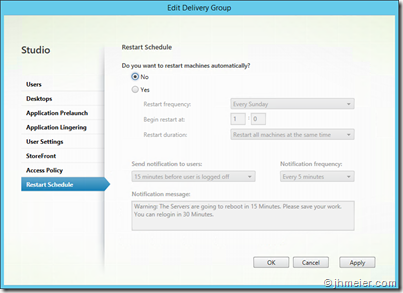

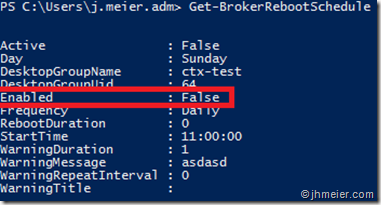

After upgrading Citrix XenApp / XenDesktop to 7.12 our scheduled reboots for the Server VDA Delivery Groups did not work any longer. Citrix provided us with a Hotfix (that’s also included in 7.13) – but even that did not fix the problem. To fix it we had to recreate the reboot schedule. If you now think you can just remove the reboot settings using the Studio you are wrong:

To see that the reboot schedule still exists you need to open a PowerShell and enter the following commands:

Add-PSSnapin Citrix*

Get-BrokerRebootSchedule

You will now get a list of all configured reboots. The only thing that changed is that the reboot schedule for your delivery group was set to Disabled:

For completely removing the reboot schedule enter the following command:

Remove-BrokerRebootSchedule -DesktopGroupName "DESKTOP GROUP NAME"

After that you can recreate your schedule using the Studio (or PowerShell). That’s it.

If you pass a 3DConnexion SpaceMouse through to a Citrix HDX 3D Pro session, you expect everything to work like on a local computer. Unfortunately that’s not always the case. It can happen that the keys on the left side (Ctrl, Alt, Esc, …) do not work, while everything else works fine. When you look at the release notes of XenDesktop 7.11 you find the following fix:

Customized functions for a 3DConnexion SpaceMouse might not work in a VDA session.

[#LC4797]

You might now think: OK, let’s upgrade to 7.11 (or a later version) and the problem is gone. That might help you, but it did not help us. Thus we created a ticket at Citrix. They told me that the following registry key also needs to be added:

Path: HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\services\picakbf

Key: Enable3DConnexionMouse

Type: REG_DWORD

Value: 1

After adding the key you need to reboot your VDA. Now the 3DConnexion Mouse should fully work. As far as I know this is not documented anywhere else….
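To roll the setting out to several VDAs, one option is to generate a .reg file and import it. The following is just a sketch that builds the file content from the values above; the function name `reg_dword_file` is made up.

```python
def reg_dword_file(key_path: str, value_name: str, value: int) -> str:
    """Build the text of a .reg file that sets a single REG_DWORD value."""
    lines = [
        "Windows Registry Editor Version 5.00",
        "",
        f"[{key_path}]",
        # DWORD values are written as eight hexadecimal digits.
        f'"{value_name}"=dword:{value:08x}',
        "",
    ]
    return "\r\n".join(lines)


content = reg_dword_file(
    r"HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\services\picakbf",
    "Enable3DConnexionMouse",
    1,
)
print(content)
```

Save the returned text to a file with the .reg extension (for example with `open(path, "w", encoding="utf-16", newline="")` so the line endings are not converted a second time) and import it on the VDA. The reboot is still required afterwards.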

You might have read my last article about a crashing XenServer 7 when it’s used with an Intel Xeon v4 and an NVIDIA M60 in a Dell R730. The same problem happens when you use an M10 from NVIDIA. Furthermore I have heard that the same problem happens with Fujitsu servers.

Our workaround until now was to replace the Xeon v4 CPUs with v3 ones (with less performance). Luckily Citrix Engineering (and Support) found the cause of the problem. It’s related to the Intel PML (Page Modification Logging) function. You can find some detailed information on this page from Intel.

Thus we disabled this function in XenServer and replaced the v3 CPUs with v4 ones. After that we started stress testing the system. No error occurred. Thus we placed users on the system, and it kept running. So far we have had no more crashes with the function disabled.

To disable the function you need to modify the grub.cfg. Those of you who have more than 512GB RAM in their hosts should already know that process.

Open a console session and either switch to /boot/grub (Bios) or /boot/efi/EFI/xenserver (UEFI) – depending on the boot setting of your server.

Open grub.cfg with vi:

vi grub.cfg

Now you need to add the following command to the multiboot2 /boot/xen.gz line:

ept=no-pml

After that the file should look like this (don’t remove iommu… if you have more than 512GB Ram):

Save and close the file with :wq and reboot the host. That’s it. Now the function is disabled and the problem is gone. A big thanks to Antony Peter (Citrix Support) and Anshul Makkar (Citrix Engineering) for taking so much time to look at our environment and debugging the problem with me.
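If you have to change several hosts, the edit can also be scripted instead of done by hand in vi. A minimal sketch, assuming the option is simply appended to every line starting with multiboot2 /boot/xen.gz. The sample line in the usage part is hypothetical and will differ from your grub.cfg.

```python
def add_xen_option(grub_cfg: str, option: str = "ept=no-pml") -> str:
    """Append a Xen boot option to every 'multiboot2 /boot/xen.gz' line.

    Lines that already contain the option are left unchanged, so running
    the function twice does not add the option twice.
    """
    result = []
    for line in grub_cfg.splitlines():
        stripped = line.strip()
        if stripped.startswith("multiboot2 /boot/xen.gz") and option not in stripped.split():
            line = f"{line.rstrip()} {option}"
        result.append(line)
    trailing_newline = "\n" if grub_cfg.endswith("\n") else ""
    return "\n".join(result) + trailing_newline


# Hypothetical excerpt; the real options on your host will look different.
sample = "\tmultiboot2 /boot/xen.gz dom0_mem=752M console=vga\n\tmodule2 /boot/vmlinuz root=LABEL=root\n"
print(add_xen_option(sample))
```

Keep a backup copy of grub.cfg before writing the result back, and reboot the host afterwards as described above.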

During the last weeks we have been facing a strange problem with Dell R730 / Dell R7910, NVIDIA M60 and Citrix XenServer 7.0. It’s not solved yet. I will update this blog post when there are new findings (or solutions). Before I come to the problem I would like to give you some background information about the whole project:

Our company is getting a new CAD program. For the training we decided to use virtual desktops with graphics acceleration instead of classical workstations. Around 40 users are trained at the same time. Thus we discussed the topic with Dell and in the end we bought (on Dell’s recommendation) the following hardware (twice):

Dell 7910 Rack Workstations

512GB RAM

2X Intel Xeon E5-2687W v4 (3.00GHz)

1X NVIDIA M60

We did some initial testing and everything worked without any problems. With ~10 key users we “simulated” a training to check how many users we can get on one machine and whether we run into any problems. Luckily a small NVIDIA vGPU profile (M60-0B, yes B) was fast enough. So we can handle up to 64 users with the two workstations, more than enough for our trainings.
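The 64 users can be reproduced with a short calculation. The frame buffer sizes are assumptions that are not stated in this post: 16GB per M60 card and 512MB per M60-0B vGPU. The function name is made up.

```python
def max_vgpus(card_frame_buffer_mb: int, profile_mb: int,
              cards_per_host: int, hosts: int) -> int:
    """Number of vGPUs of one profile that fit into the available frame buffer."""
    per_card = card_frame_buffer_mb // profile_mb
    return per_card * cards_per_host * hosts


# Assumptions: an M60 has 16GB frame buffer, an M60-0B vGPU uses 512MB.
M60_FRAME_BUFFER_MB = 16 * 1024
M60_0B_PROFILE_MB = 512

print(max_vgpus(M60_FRAME_BUFFER_MB, M60_0B_PROFILE_MB, cards_per_host=1, hosts=2))
```

With one M60 per workstation and two workstations this gives the 64 users mentioned above. Larger profiles reduce the number accordingly.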

Due to some problems in the project the trainings had to be delayed, which was fine for us because we thought everything was working and we had nothing to do in this area. In the meantime we checked the current CAD hardware. This check showed that around 30 clients must be replaced before the new CAD software goes live, because it will not work on these clients. Another 30 need to be replaced during the year. A lot of discussions and calculations started about whether we should also provide productive CAD users with a virtual desktop instead of a classical physical one. Again we had discussions with Dell and ended up with the following configuration:

Dell R730

576GB RAM (512 for VMs – 64 for Hypervisor)

2X Intel Xeon E5-2667 v4 (3.20GHz)

1X NVIDIA M60

The system was ordered three times (for redundancy). At this point it was not clear how many users we could get on one server. We planned to use these systems to do more tests and find the best NVIDIA vGPU profile that fulfills the user requirements.

After the hardware arrived it was installed directly. At the same time the trainings started, and so did our problems. After a few training days one of the R7910 suddenly froze: neither the VMs nor the hypervisor (XenServer 7) itself reacted any longer. We had to hard reboot the whole system. This continued to happen on both systems over the next days. There were no crash dumps on the XenServer or events in the iDRAC server log. Thus we decided to activate one of the R730 to get a more stable training environment and to investigate the problem with less pressure. However, the R730 also had a problem. Instead of freezing, there was a hard hardware reset after some time. In the iDRAC server logs the following errors appeared:

Fri Dec 02 2016 10:43:16 CPU 2 machine check error detected.

Fri Dec 02 2016 10:43:10 An OEM diagnostic event occurred.

Fri Dec 02 2016 10:43:10 A fatal error was detected on a component at bus 0 device 2 function 0.

Fri Dec 02 2016 10:43:10 A bus fatal error was detected on a component at slot 6.

Fri Dec 02 2016 10:45:24 CPU 1 machine check error detected.

Fri Dec 02 2016 10:45:24 Multi-bit memory errors detected on a memory device at location(s) DIMM_A3.

Fri Dec 02 2016 10:45:24 Multi-bit memory errors detected on a memory device at location(s) DIMM_B1.

Fri Dec 02 2016 10:45:24 A problem was detected related to the previous server boot.
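The entries are not listed in chronological order. To see the actual sequence of events you can parse and sort them, for example with the following sketch. It assumes every entry has the form shown above (weekday, month, day, year, time, then the message) and an English locale for the day and month names.

```python
from datetime import datetime

# Log entries exactly as shown above.
IDRAC_LOG = """\
Fri Dec 02 2016 10:43:16 CPU 2 machine check error detected.
Fri Dec 02 2016 10:43:10 An OEM diagnostic event occurred.
Fri Dec 02 2016 10:43:10 A fatal error was detected on a component at bus 0 device 2 function 0.
Fri Dec 02 2016 10:43:10 A bus fatal error was detected on a component at slot 6.
Fri Dec 02 2016 10:45:24 CPU 1 machine check error detected.
Fri Dec 02 2016 10:45:24 Multi-bit memory errors detected on a memory device at location(s) DIMM_A3.
Fri Dec 02 2016 10:45:24 Multi-bit memory errors detected on a memory device at location(s) DIMM_B1.
Fri Dec 02 2016 10:45:24 A problem was detected related to the previous server boot.
"""


def parse_entry(line: str):
    """Split one log line into (timestamp, message)."""
    parts = line.split(" ", 5)  # five timestamp fields, then the message
    timestamp = datetime.strptime(" ".join(parts[:5]), "%a %b %d %Y %H:%M:%S")
    return timestamp, parts[5]


def sorted_entries(log: str):
    """Return all entries ordered by time; entries with equal time keep their order."""
    entries = [parse_entry(line) for line in log.splitlines() if line.strip()]
    return sorted(entries, key=lambda entry: entry[0])


for timestamp, message in sorted_entries(IDRAC_LOG):
    print(timestamp.time(), message)
```

Sorted this way, the bus fatal errors at 10:43:10 come before the machine check error at 10:43:16.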

Furthermore the boot screen showed the following message:

Ok – Time for a Ticket at Dell. The support person told me that there are two known problems when the M60 is used:

- The M60 is connected with a wrong cable and does not get enough power

- The power cable of the M60 is in the Airflow of the card

We checked both. The results were quite promising:

R7910:

A wrong cable was used – so the M60 can’t get enough power. The wrong one looks like this:

The correct one has two plugs instead of one. It’s a CPU 8-pin auxiliary power cable (see NVIDIA M60 Product Brief, page 11):

The side with one plug is connected to the M60.

Of course this cannot be directly connected to the power-plug on the riser card. Thus you also need the following cable:

As you can see, this one also has two plugs on one side. Connect these two plugs to the two plugs of the correct cable, and the white plug to the riser card.

Another thing you need to make sure of is that the power cable is not in the airflow of the M60 cooling. It seems to happen quite often that the cable is placed behind the M60 instead of under it (as shown in the picture).

The rest of the cable fits next to the card.

Furthermore Dell advised us to change the Power Supply Options. You can find them on the iDRAC: Overview => Hardware => Power Supplies. On the right side there is now an Option Power Configuration.

They suggested changing the Redundancy Policy to Not Redundant and Hot Spare to Disable.

After correcting this it first looked like the problem was fixed. Unfortunately it was not. After a few days in production one of the R7910 froze again. Later I discussed this topic again with another Dell engineer. After that we changed the setting back to the following:

Redundancy Policy:

You can find a detailed description of the settings in the iDRAC User Guide on page 161.

At this point Dell thought they had another customer with the same configuration (Dell R730 + NVIDIA M60 + XenServer 7). Therefore they started to check for hardware differences between both systems. They found the following differences and replaced our parts with the ones the other customer uses:

Power Supply:

We had one from Lite-On Technology.

The other customer had one from Delta Electronics INC.

Furthermore their motherboard had a different revision number, so ours was changed to one with the same revision (sorry, I didn’t take a picture of both). To be sure, even one M60 was replaced.

No replacement made any difference. Later we figured out that the other customer was using XenServer 6.5 and not 7. During all the tests I found that rebooting 32 VMs several times (often just one reboot was enough) led to the problem, so it was reproducible.

In the meantime the case was escalated. Dell did many hardware replacements (really uncomplicated), but nothing changed. Interestingly, even now it didn’t look like Dell Engineering was involved. We created a case at NVIDIA and contacted system engineers from NVIDIA and Citrix. In parallel I created a post on the NVIDIA GRID Forums, where BJones in particular gave some helpful feedback.

After discussing the problem we did a few more tests:

Testing different vGPU Drivers:

| Host | VM |
| --- | --- |
| 361.45 | 362.56 |
| 367.43 | 369.17 |
| 367.64 | 369.71 |

The problem was always the same.

| Test | Result |
| --- | --- |
| Switch to Passthrough GPU | Problem solved |
| Remove Driver from Guest | Problem solved |
| Use different vGPU | Problem exists |
| Reduce Memory to 512GB (from 576GB) | Host freezes instead of crash |
| Reduce Memory to 128GB | Problem solved |
| Replace Xeon v4 CPU with v3 | Problem solved |
| Use XenServer 6.5 | Problem solved |

There were more tests, but to be honest I don’t remember every detail.

One of the things we also changed was adding the iommu=dom0-passthrough parameter to the Xen boot line. Although we didn’t have the problem that the driver inside the VM did not start, the behavior changed a little. At first it looked like the problem was fixed, but in the end it only took more reboots to crash the system. After that a crash dump appeared on the XenServer, which had never happened before. Unfortunately the crash dump didn’t contain any useful information.

At the moment we are working with Citrix Engineering and NVIDIA (although the focus is mainly on Citrix, because we don’t see the NVIDIA driver as part of the problem). One of the other thoughts was that a microcode patch from Intel could help to solve the problem. This patch is not included in the current Dell BIOS: the current microcode version is 0X1F, while the Dell BIOS currently has 0X1E integrated. Fujitsu updated its BIOS with 0X1F at the end of December. We installed the update manually, but it didn’t change anything. (If I have some time later I will publish a separate blog post on how to do that…).

Our current workaround is to use v3 CPUs. The performance is lower – but it was the option where we didn’t have to change anything in our environment (no reinstallation or reconfiguration) except the CPU.

Since yesterday we have been running another test with v4 CPUs. We disabled a new CPU feature whose support was added in Xen 4.6 (=> XenServer 7). So far we have had no crashes *fingers crossed*. User tests will follow tomorrow.

One last (personal) thing at the end:

I really appreciate that Dell did many hardware replacements really fast and without complications. Nevertheless it never felt like Dell Engineering was involved in solving the problem. Citrix and NVIDIA engineers asked us a lot of questions, but we didn’t receive any from Dell Engineering.

The point I am more worried about is how Dell “continues” to support an environment with XenServer. During the discussions we often got statements like “We don’t support that because it’s not on the Citrix HCL”. Interestingly, on the XenServer HCL you can find the Dell R720 (a quite old system) in conjunction with the NVIDIA M60. On the other hand the R730 is listed with the M10. Furthermore Dell sent an R730 to Citrix for testing and adding it to the HCL. The “normal” way I know is that the hardware vendor does all the tests for the HCL and just sends Citrix the results. We have asked our Dell representatives for an official statement. Hopefully we will receive it soon and it’s positive about XenServer support from Dell (and they don’t try to replace XenServer with VMware…).