If you look at the endpoints used in VDI / SBC environments, you often see Thin Clients. There are several Thin Client vendors around. Most companies see them as a combined hardware and software delivery, although some vendors now position themselves as a software solution.

Besides that, most of them rely on a customized Linux system into which they integrate the client from the VDI / SBC vendor, e.g. the Citrix Workspace App (formerly Receiver). Unfortunately, this leads to two issues:

- Linux clients often have fewer features than the Windows version

- The vendor of the actual VDI / SBC client software does not support the (custom) implementation of the Thin Client vendor. Thus, to get support you must install a native Linux on some hardware and reproduce the issue on that device with the official client software.

On the other hand, the Thin Client vendors often also offer a Windows based version of their devices. But these are often limited in performance and manageability. Besides that, it would mean introducing another device type into your environment (which of course requires a separate configuration).

During my previous job we had some issues with Linux based Thin Clients that could not be fixed. As we also didn’t like the limitations of the Windows Thin Clients, we thought: Why don’t we create our own Windows based Thin Client, based on a small form factor device? These devices often already have a Windows license included. This means that you sometimes need fewer / cheaper licenses from Microsoft for connecting to your VDI / SBC environment. I can’t tell you details about this as I am no licensing expert (and honestly, I don’t intend to become one). Furthermore, these devices are often powerful enough to easily handle H.264 decoding and even H.265. In some remoting scenarios this is important for a smooth user experience.

The requirements to fulfill were manageable:

- User has no access to the local system itself (e.g. Desktop etc.)

- Automatic logon to the local device

- Browser with StoreFront Website is automatically started

- Peripheral devices can automatically be redirected to the Citrix Session (e.g. 3DConnexion Devices)

- Installation must be possible with current Software Deployment Tool

- Central Configuration

- Automatic start of Desktop when only one Desktop is assigned

- Desktop starts in Full screen mode

Some of you might now think: There are already solutions on the market that solve these issues, like:

- ThinKiosk

- Citrix Desktop Lock

- Citrix WEM Transformer Mode

But these solutions have some points we didn’t like in our scenario. They are either quite limited, e.g. Desktop Lock always starts the first available Desktop for the user, so he can’t choose which Desktop is started when multiple Desktops are assigned to him. Or, like the other solutions, they would have made it necessary to implement / maintain / configure another piece of software. Yes, these software solutions have their advantages, but the idea was to just use the available tools!

My first idea was to use the Windows 10 function Assigned Access. Unfortunately, it was only possible to publish modern apps with this solution and not classic ones like Internet Explorer. In the documentation (and if you google for it) you see that there are commands / scripts available to also publish classic apps, but that didn’t work for me.

So, I decided to look at other ways. I remembered that it was possible to assign a Custom User Interface to Windows with a GPO. My idea was to automatically log on to the endpoint, start Internet Explorer in full screen mode and open the StoreFront Website.

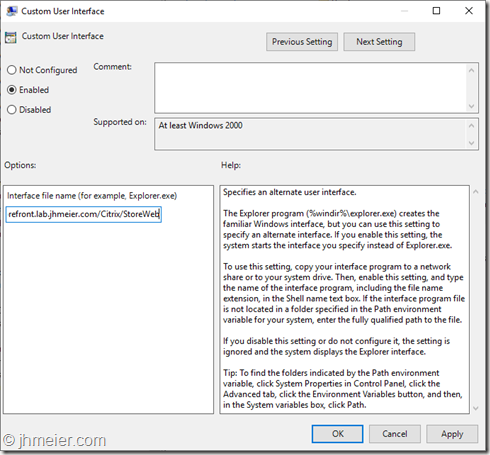

The first step was to create a new Group Policy named Windows Thin Client and assign it to the OU containing my test device. Inside the policy I configured the following setting to automatically launch Internet Explorer instead of Windows Explorer after logon:

User Configuration => Policies => Administrative Templates => System => Custom User Interface

Interface File name

"c:\Program Files\Internet Explorer\iexplore.exe" -k https://storefront.lab.jhmeier.com/Citrix/StoreWeb

This starts Internet Explorer in kiosk mode (-k) and opens the StoreWeb page.

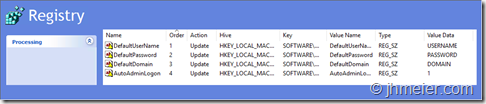

The next step is to enable the automatic user logon. Microsoft has documented the necessary registry keys here. You need to create the following registry keys:

Path:

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Windows NT\CurrentVersion\Winlogon

| Name | Type | Data |
|---|---|---|
| DefaultUserName | REG_SZ | USERNAME |
| DefaultPassword | REG_SZ | PASSWORD |
| DefaultDomain | REG_SZ | DOMAIN |
| AutoAdminLogon | REG_SZ | 1 |

I added them to the same policy:

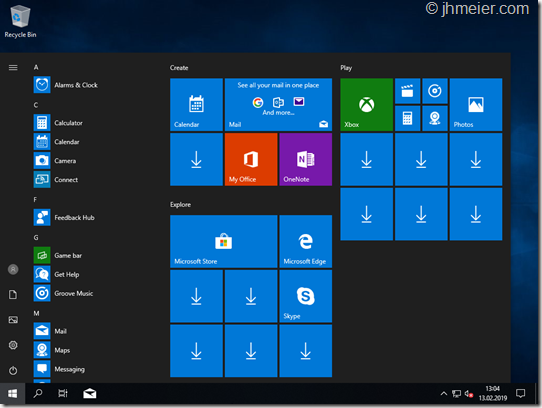

Time to power up the test machine and see how it works so far. The test system was Windows 10 LTSC (to keep the number of necessary upgrades low) with an anti-virus tool and a current Citrix Receiver (Workspace App).

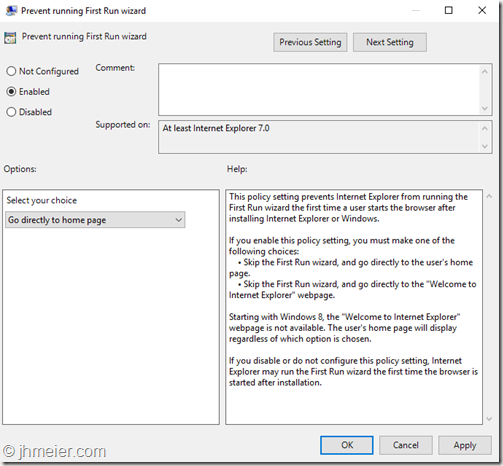

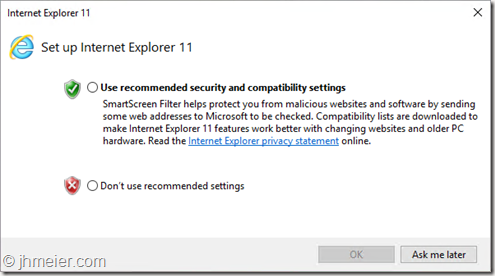

The system booted, the user was automatically logged in and Internet Explorer was started, but the Set up Internet Explorer dialog also popped up:

Ok, back to the GPO to disable the wizard:

After closing the First Run Wizard, Internet Explorer asked if I really wanted to run the Citrix Receiver ActiveX plugin:

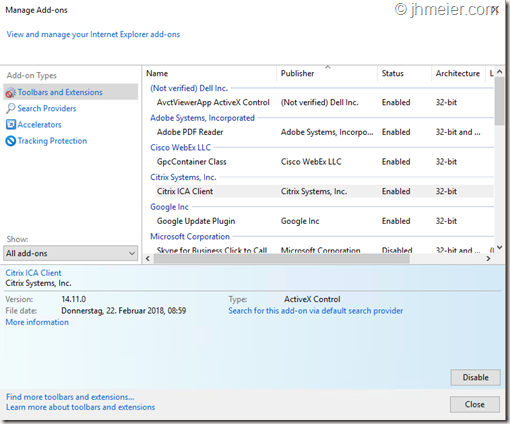

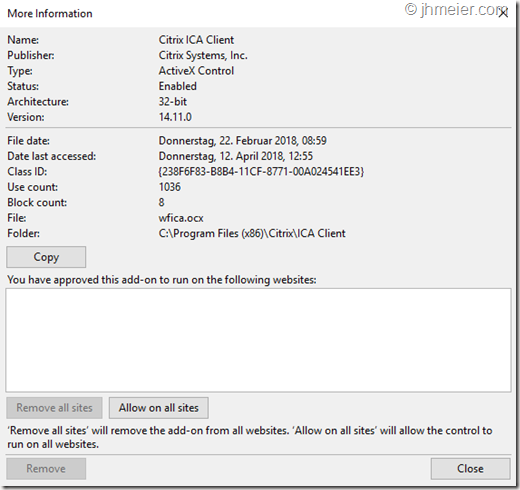

It is possible to automatically allow an ActiveX plugin with a GPO setting, but for that we need the Class ID of the add-on. To find it, open Manage Add-ons from the Internet Explorer settings menu on the top right. Now select the Citrix ICA Client add-on and press More information on the bottom left to show the details.

Inside the details view you get information like Name, Publisher, etc. and also the Class ID. Use Copy to copy the details (including the Class ID) to the clipboard.

Open Notepad and paste the content. We now just need to copy the Class ID. After copying the Class ID to the clipboard, switch back to your GPO. The Class ID for the Citrix ICA Client is:

{238F6F83-B8B4-11CF-8771-00A024541EE3}

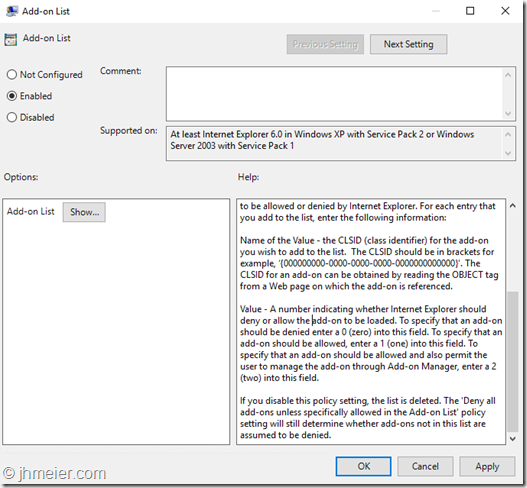

You now need to open the following setting:

Computer => Administrative Templates => Windows Components => Internet Explorer => Security Features => Add-on Management => Add-On List

Here you can now configure Add-ons as allowed. Press Show….

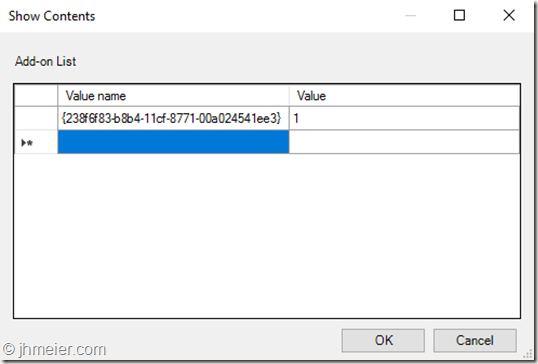

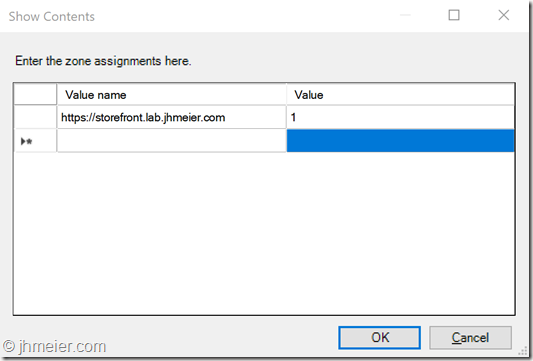

… to open the Add-on configuration. As the Value name paste the copied Class ID. For the Value enter “1”. (1 enables the Add-on and this cannot be changed by the user.)

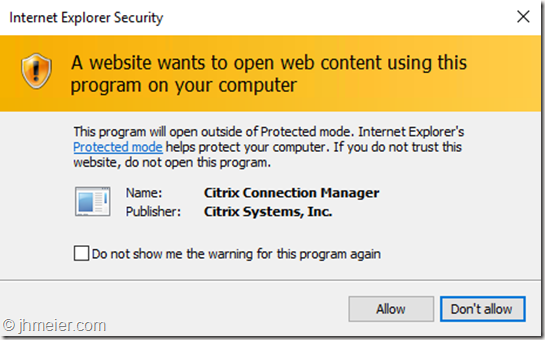

Now it was time to logon to the StoreFront Website and launch a Desktop. But before the Desktop was started another message appeared:

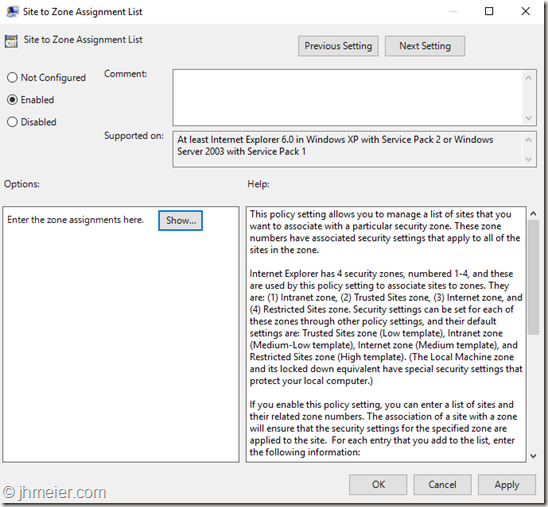

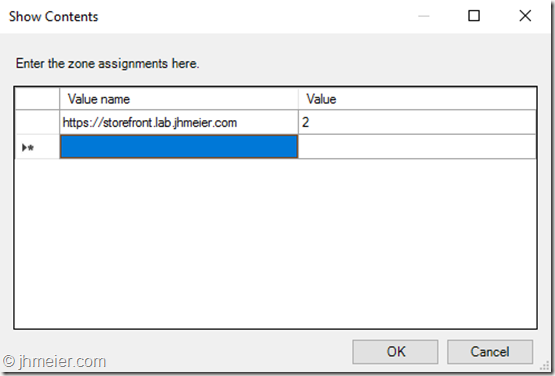

Ok. Back to the GPO (again) and assign the StoreFront Website to the correct Security Zone. You need to open the following setting:

Computer => Administrative Templates => Windows Components => Internet Explorer => Internet Control Panel => Security Page => Site to Zone Assignment List

Press Show… to open the configuration. The Value Name is the URL (starting with https://) of your StoreFront Website. The Value is 2 (Trusted Sites).
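To make the zone numbers less magic, here is a small Python sketch of how an entry for the Site to Zone Assignment List could be put together. The zone numbers (1 = Intranet, 2 = Trusted Sites, 3 = Internet, 4 = Restricted Sites) are the standard Internet Explorer values. The helper `zone_entry` is purely hypothetical, and reducing the URL to scheme and host is an assumption of this sketch, not something the policy requires.

```python
from typing import Tuple
from urllib.parse import urlparse

# Standard Internet Explorer security zone numbers.
ZONES = {"intranet": 1, "trusted": 2, "internet": 3, "restricted": 4}


def zone_entry(url: str, zone: str) -> Tuple[str, str]:
    """Return (value name, value) for one Site to Zone Assignment List row.

    Hypothetical helper: it only prepares the two strings you would type
    into the GPO dialog, it does not touch any policy itself.
    """
    parsed = urlparse(url)
    if parsed.scheme != "https" or not parsed.netloc:
        raise ValueError("expected a URL starting with https://")
    if zone not in ZONES:
        raise ValueError("unknown zone: " + zone)
    # Assumption of this sketch: the entry is reduced to scheme and host.
    site = parsed.scheme + "://" + parsed.netloc
    return site, str(ZONES[zone])


if __name__ == "__main__":
    store = "https://storefront.lab.jhmeier.com/Citrix/StoreWeb"
    print(zone_entry(store, "trusted"))
    print(zone_entry(store, "intranet"))
```

Keeping the mapping in one place makes it easy to see what changes when a site is moved from one zone to another: only the number in the Value column.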

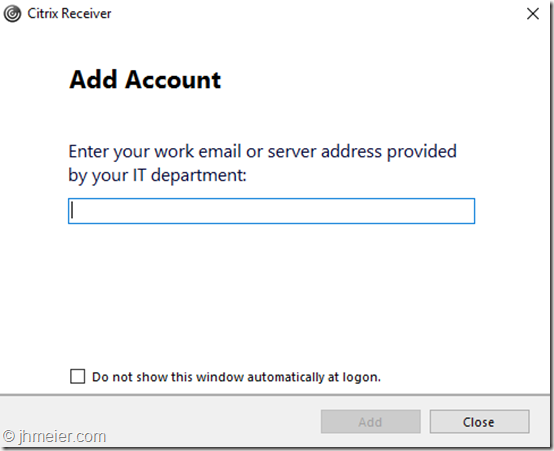

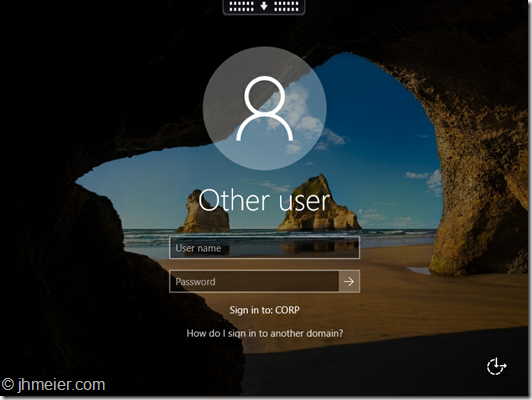

Time to reboot the test client, delete the user profile and log on again as a user to see how it behaves now. After logging in, Internet Explorer was started and the StoreFront Website was opened, but the following dialog also appeared:

The user can disable the message with Do not show this window automatically at logon, but that would be a manual step on every new client. In theory there is a Citrix Receiver policy setting that disables this message, but it did not work on my test client, so I looked for another way. CTX135438 describes several options to disable the message. Besides the GPO it is also possible to install the Receiver with a parameter that disables the message. I changed the software deployment to install the Receiver with the following parameter:

CitrixReceiver.exe /ALLOWADDSTORE=N

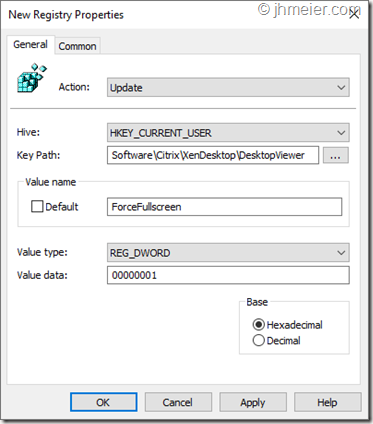

After a reinstallation of the system the message was gone. Time for some more tests. After a user logged in to StoreFront, a Desktop was automatically launched when only one was assigned, as expected, but it was not launched in full screen mode. After switching to full screen, logging off from the desktop and connecting again, the desktop was launched in full screen. The only problem is that this requires user interaction, and the users were used to the desktop starting in full screen automatically. I found several methods that describe how to force full screen mode for started desktops. They did not work. After some more searching I stumbled across this discussion. Inside this discussion the following registry key was mentioned:

Path:

HKEY_CURRENT_USER\Software\Citrix\XenDesktop\DesktopViewer

Type: Dword

Name: ForceFullscreen

Value: 00000001
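For a quick test on a single machine (outside of the GPO), the registry value above could also be written as a .reg file. The following Python sketch only renders the file content. The function name `render_dword_reg` is made up for this example, and importing the result with regedit is an assumption about how you would test it, not part of the original setup.

```python
# Key taken from the discussion mentioned above.
DESKTOP_VIEWER_KEY = r"HKEY_CURRENT_USER\Software\Citrix\XenDesktop\DesktopViewer"


def render_dword_reg(key: str, name: str, value: int) -> str:
    """Render a minimal .reg file that sets one DWORD value."""
    if not 0 <= value <= 0xFFFFFFFF:
        raise ValueError("a DWORD must fit into 32 bits")
    lines = [
        "Windows Registry Editor Version 5.00",
        "",
        "[" + key + "]",
        # .reg files store DWORD data as eight hexadecimal digits.
        '"{0}"=dword:{1:08x}'.format(name, value),
        "",
    ]
    return "\r\n".join(lines)


if __name__ == "__main__":
    print(render_dword_reg(DESKTOP_VIEWER_KEY, "ForceFullscreen", 1))
```

As the value lives under HKEY_CURRENT_USER, such a file would have to be imported in the context of the user who starts the desktop.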

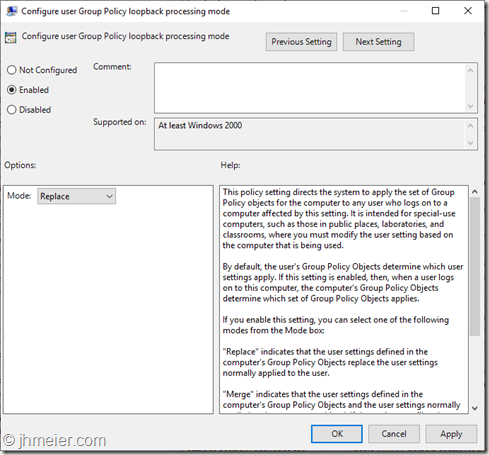

As this is a user setting I first enabled the loopback mode as the GPO was assigned to the Computer object and not the user:

Then I added this entry to the GPO:

Deleting the user profile and another test: yes, it worked as expected. The user was automatically logged on to the client, the StoreFront Website was opened, the user could log in and the published Desktop was started in full screen. I did some more tests and everything worked fine.

Then I locked the client. However, it was not the remote session that was locked but the local client. That means that the user would need to know the password of the account that was automatically logged in to the client, and that every user could unlock every other client. Not a good idea. Besides that, I started to think it through: if only the remote session was locked, another user would be able to disconnect the session. If the automatic logout of the StoreFront website had not happened yet, he would be able to restart the user’s session without knowing the password. Not a good way.

I had to drop the idea of automatically logging on to the client. Instead, the user needs to log in to the client himself and an automatic login to StoreFront is required. I removed the following registry settings from the GPO to disable the automatic client login:

Path:

HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Windows NT\CurrentVersion\Winlogon

| Name | Type | Data |
|---|---|---|
| DefaultUserName | REG_SZ | USERNAME |
| DefaultPassword | REG_SZ | PASSWORD |
| DefaultDomain | REG_SZ | DOMAIN |
| AutoAdminLogon | REG_SZ | 1 |

The next step was to create a new StoreFront Website that allows automatic user logons. So, I opened the StoreFront console, selected my store and switched to the Receiver for Web site area to add another one (Manage Receiver for Web Sites => Add).

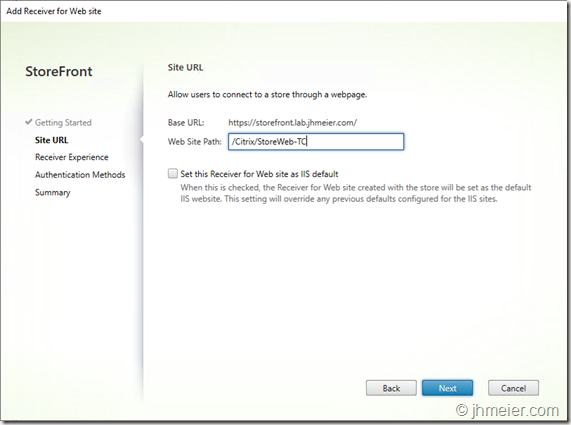

The first step is to enter the name / path of the web site.

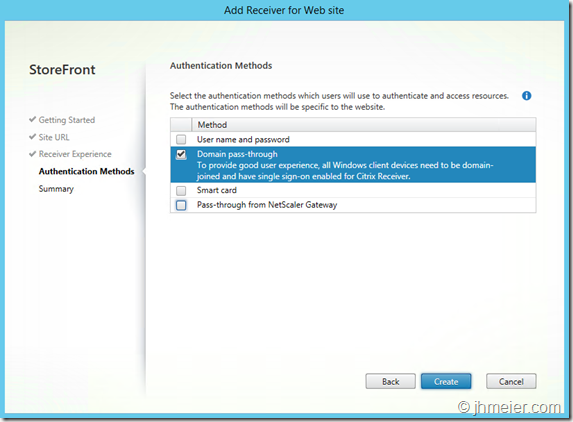

After that it was necessary to configure Domain pass-through as the Authentication Method:

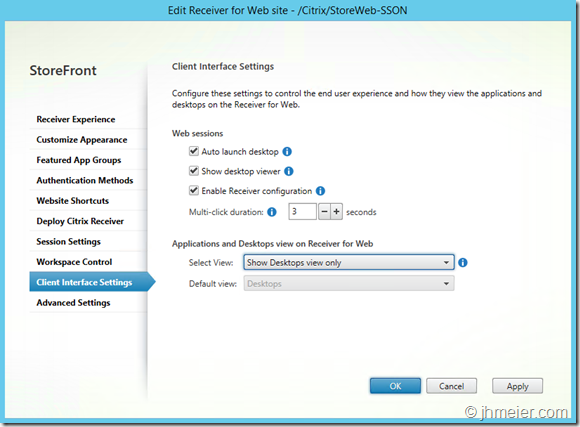

As the user should only be able to launch a desktop and not an application I opened the settings of the new web site. Under Client Interface Settings I changed the Select View to Show Desktops view only.

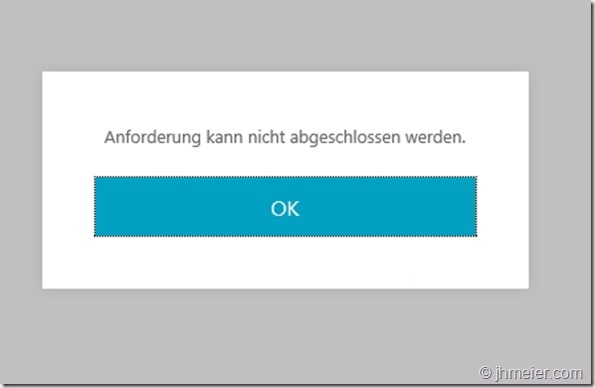

After changing the URL in the GPO to the new web site I logged in with a user to the client. The new website was opened and the following error message appeared:

Cannot complete your request

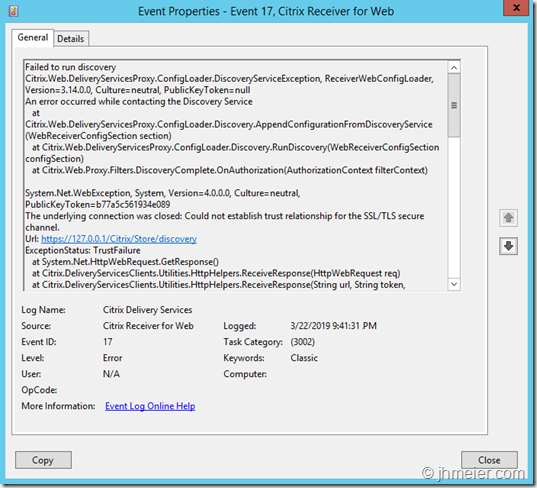

On the StoreFront server I found the following Event.

Source: Citrix Receiver for Web

Event ID: 17

Level: Error

Failed to run discovery

Url: https://127.0.0.1/Citrix/Store/discovery

Exception Status: TrustFailure

As there was a domain certificate assigned to the StoreFront IIS website with the domain name, it was not surprising that the connection to the local IP address failed with a trust error.

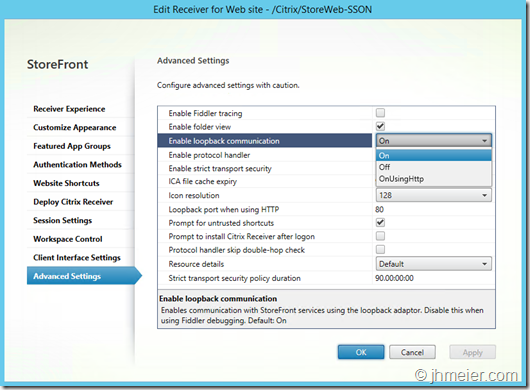

To fix this I went back to the settings of the newly created StoreFront Website. Under Advanced Settings I changed Enable loopback communication to OnUsingHttp.

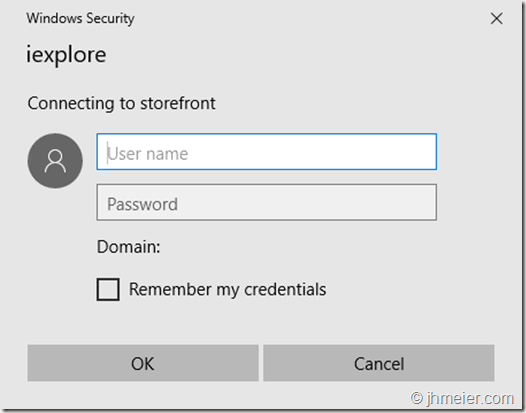

After that the error was gone – but the user was prompted to login:

To fix this I had to change the zone assignment of the StoreFront Website to the Intranet Zone instead of Trusted Sites. Another solution would have been to enable the setting Automatic logon with current username and password for the zone Trusted Sites.

After this change the StoreFront Website was automatically logged on and I was able to start a published Desktop. But now the published desktop required a separate login instead of using the local username / password.

Time to add the Receiver ADM/ADMX Templates to our configured GPO. Inside the Receiver Settings (Computer Configuration => Citrix Components => Citrix Receiver => User Authentication) you find the following setting:

Local user name and password

Inside this you need to enable the following two settings so that the Receiver can automatically log the user on to the published desktop:

Enable pass-through authentication

Allow pass-through authentication for all ICA connections

(This requires you to configure XML trusts on your Delivery Controller if you didn’t use that in the past, see CTX133982. Furthermore, check whether you installed the Receiver with the Single Sign-On module, i.e. with the parameter /includeSSON during the installation. For Receiver version 4.4 or higher this parameter alone should be enough, so you would not need to enable the policy any longer, but I haven’t tested that.)

That’s it. The user was now able to log on and start a published Desktop without any further logons required.

Honestly this is just the start. There are some other points you should have a look at before deploying the system to production users:

- Use the Receiver Policy to connect USB devices automatically to the Published Desktop (Generic USB Remoting)

- Enable Kerberos Authentication for Receiver if required (Kerberos Authentication)

- System Settings, e.g.:

- Anti-Virus

- Disabling of Registry Editor

- Disable the local Task Manager (to prevent the user from killing the full screen browser)

- System Hardening

Hope it gives you an idea how you can create your “own” Windows based “Thin Client” with a GPO.

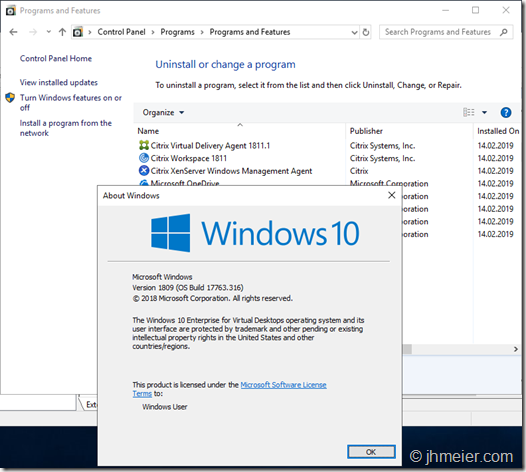

As many of you know Microsoft integrated a special Enterprise edition in the Insider Preview 17713:

Windows 10 Enterprise for Remote Services

If you search for this, you only find a few blog posts about this version – most focus on Microsoft Windows Virtual Desktops (WVD). The actual content is mostly only showing multiple RDP session at the same time on a Windows 10 VM – like you know it from the Microsoft Remote Desktop Session Host (RDSH) (or to keep the old name: Terminal Server). Only Cláudio posted two post during the last days about this topic which lead to some interesting discussions. You can find them here and here.

When using Office 365 and other programs there are some limitations when running them on RDSH – often it’s even not supported. When now Windows 10 allows multiple users to connect to one VM they have the full Windows 10 experience and on the other side they are still sharing one VM (like with RDSH).

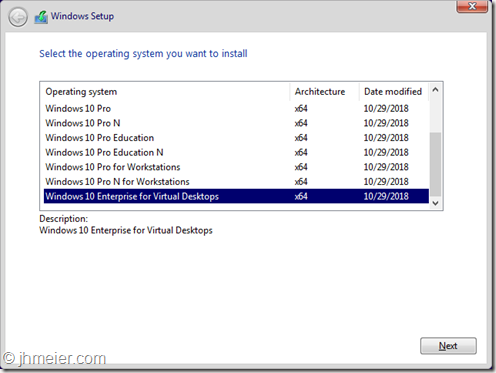

When booting an official Windows 10 1809 Iso I saw that there is still Windows 10 Enterprise for Virtual Desktops available. As I didn’t find any blog posts having a look at this version I decided to have a look myself.

[Update]

Christoph Kolbicz posted the following on Twitter – thus you can also use other ISOs of Windows 10:

If you installed Enterprise and want to get #WVD, you can also simply upgrade it with this key: CPWHC-NT2C7-VYW78-DHDB2-PG3GK – this is the KMS Client key for ServerRdsh (SKU for WVD). Works with jan_2019 ISO.

[/Update]

Disclaimer:

I am not a licensing expert and I am quite sure some of the following conflicts with license agreements. During the tests in my lab I only wanted to figure out what’s possible and where the limitations are. Besides that, some of the things described are not supported (and I bet they never will be), but I like to know what’s technically possible.

Installing Windows 10 Enterprise for Virtual Desktops and logging in

Ok, let’s get to the technical part. I connected an 1809 ISO, booted a VM and got the following Windows version selection. After selecting Windows 10 Enterprise for Virtual Desktops the normal Windows installer questions followed.

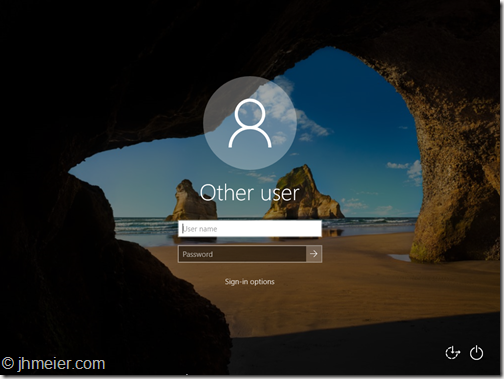

After a reboot I was presented with this:

No question about a domain join or a user, just a login prompt. So, I started to search and only found one hint on Twitter (about the Insider build) that the only options are to reset the password with a tool or to add a new user. In case you are wondering: Yes, it’s possible to create another administrator account for Windows without having login credentials. As I had already tested that in the past (and didn’t want to find out which password reset tool fits), I decided to go that way.

[Update]

Jeremy Stroebel gave me the following hint on Twitter – this way you can skip the steps below to create a local Administrator-User and continue at Multiple RDP-Connections – Not Domain Joined.

Easier, boot to safe mode and it logs right in… then add your user.

[/Update]

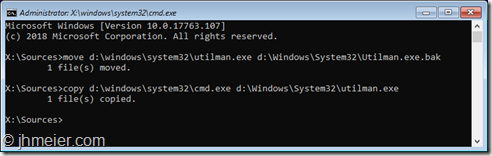

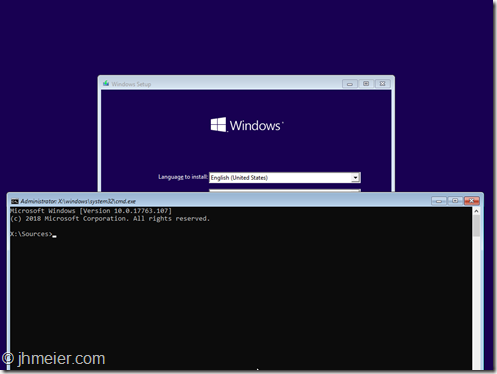

Ok, time to boot from the ISO once more and open the Command Prompt (press Shift + F10 when the setup shows up).

Now we need to replace the Utility Manager (on the normal login screen) with cmd.exe. The Utility Manager on the login screen is always started with admin rights.

To replace the Utility Manager with a command prompt, enter the following commands:

move d:\windows\system32\utilman.exe d:\windows\system32\utilman.exe.bak

copy d:\windows\system32\cmd.exe d:\windows\system32\utilman.exe

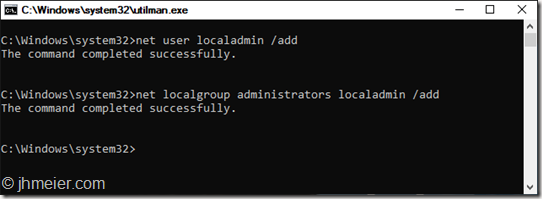

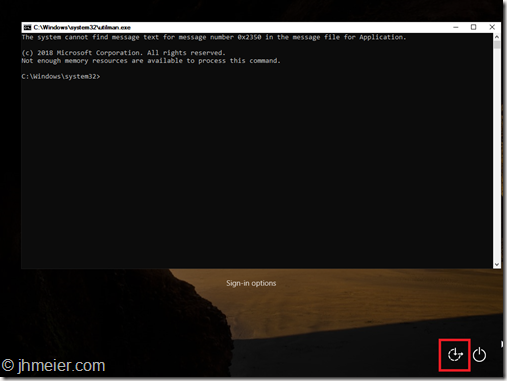

That’s it. Time to boot Windows again. Now press the Utility Manager icon on the bottom right side. Voilà: a command prompt (with elevated rights):

The next step is to create an account and add it to the local administrators group. To do so, enter the following commands:

net user username /add

net localgroup administrators username /add

And voilà, you can now log in with the user you just created (without a password):

Multiple RDP-Connections – Not Domain Joined

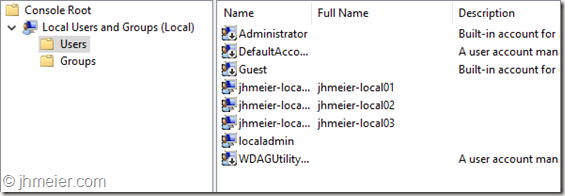

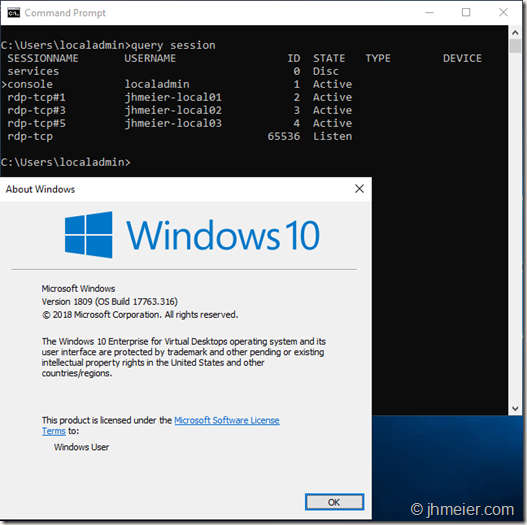

For my first tests I just wanted to connect multiple users to the machine without joining the VM to the AD to prevent impacts by policies or something else. I created three local users:

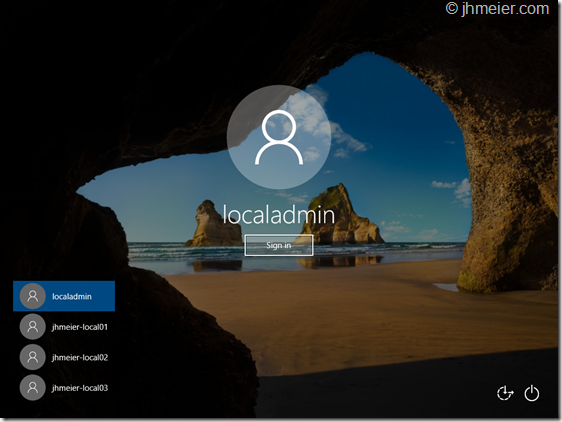

When I logged of my created Administrator all three had been available on the Welcome-Screen to Login:

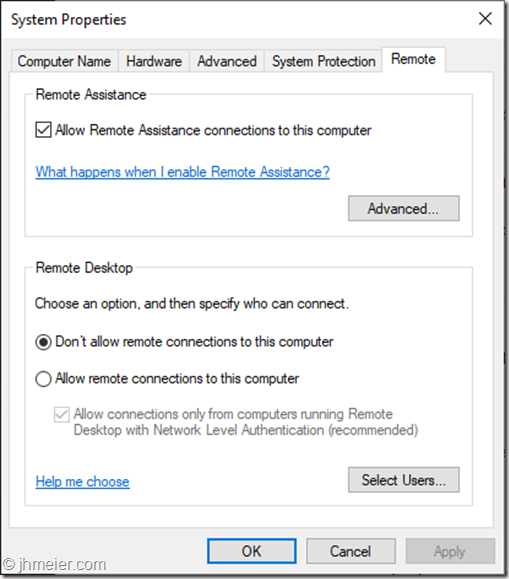

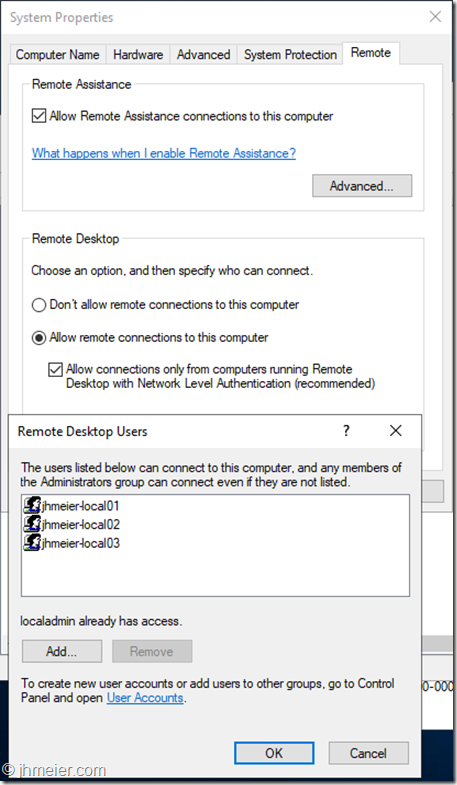

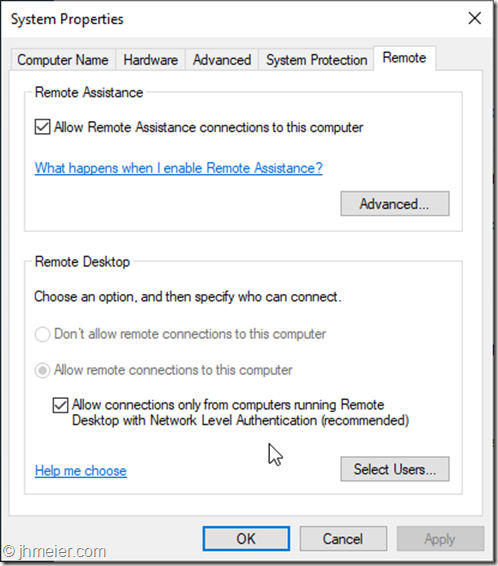

A RDP connection was not possible at this time. So let’s check the System Properties => Remote Settings. As you can see Remote Desktop was not allowed:

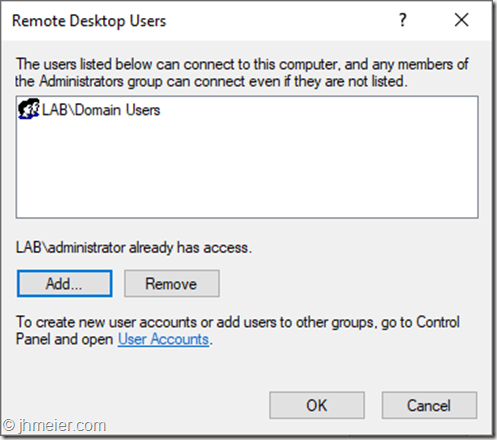

I enabled Allow remote connections to this computer” and added the three created users to the allowed RDP Users:

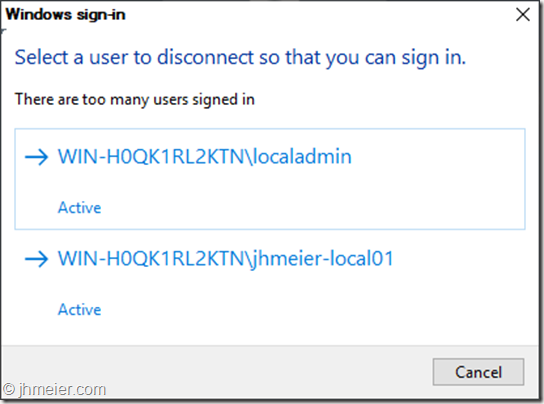

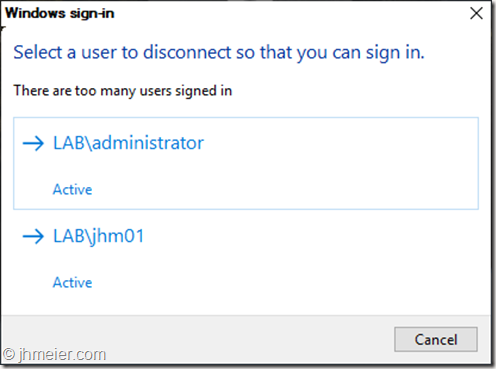

Time for another RDP-Test. The first user logged on successfully – but the second received the message that already two users are logged on and one must disconnect:

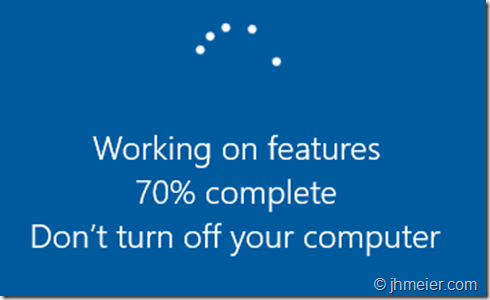

That was not the behavior I expected. After searching around a bit and finding no solution, I tried the old “Did you try a reboot yet?” method. And now something interesting happened. When shutting down, this was shown:

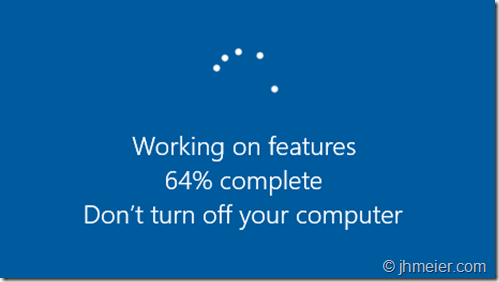

And after booting Windows showed this:

All Windows Updates had been installed before and I had added no other Windows features, functions or programs. So what was being installed now?

The welcome screen after the reboot also looked different:

Before, you could see the created users and select one for login. Now you need to enter username and password. Looks quite similar to an RDSH host after booting, doesn’t it?

So I logged in with the local admin again and this message was displayed right away:

Time for another RDP test. And guess what? This time I was able to connect multiple RDP sessions at the same time:

So it looks like the “RDSH” role is installed once RDP has been enabled and Windows is rebooted. Time for the next tests.

Multiple RDP-Connections – Domain Joined

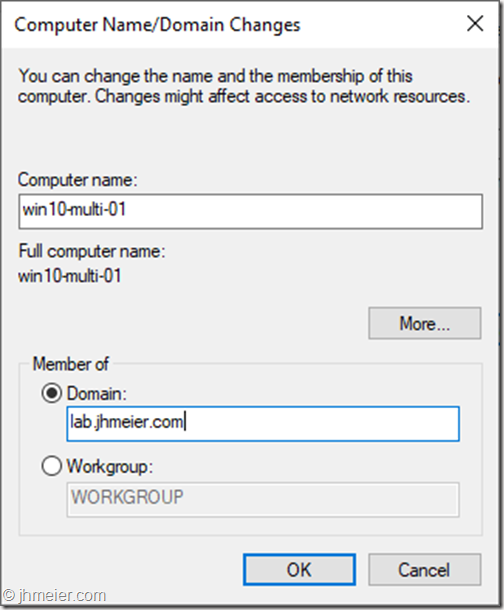

After everything worked fine I thought it was time to test how everything behaves in a domain. I reverted to a snapshot where I had just created the local admin user. I logged in and joined the machine to the domain:

After a reboot I logged in with a domain admin to enable Allow Remote Connections to this Computer.

Interestingly, this was already enabled. Furthermore, it was no longer possible to disable it. No policies had been applied to enable this setting; only the Default Domain Policy was active.

So let’s go on and allow the Domain Users to connect.

Like before, the logon of a third user was denied:

But as we already know, a reboot is necessary to install the missing features. Like before, some features were magically installed:

Now several domain users are able to connect to the Windows 10 VM at the same time using RDP.

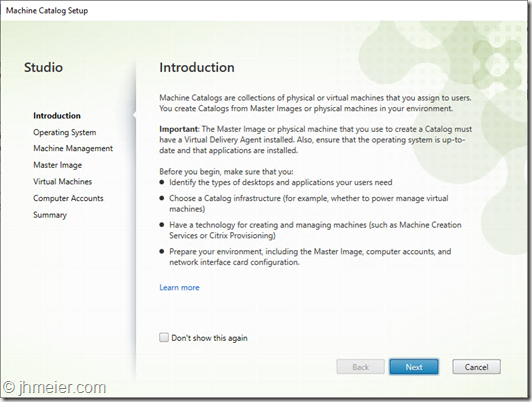

Citrix Components

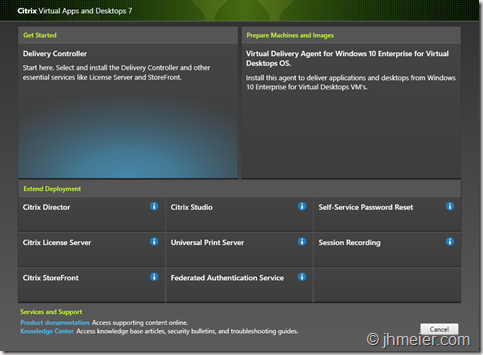

The next logical step for me was to try to install a Citrix Virtual Delivery Agent on the VM. So I connected the Citrix Virtual Apps and Desktops 7 1811 ISO and started the component selection. But what’s that?

Next to the VDA it’s also possible to select all other roles – which are normally only available on a Server OS! (Just a quick reminder: None of the things I test here are designed or supported to work under such circumstances).

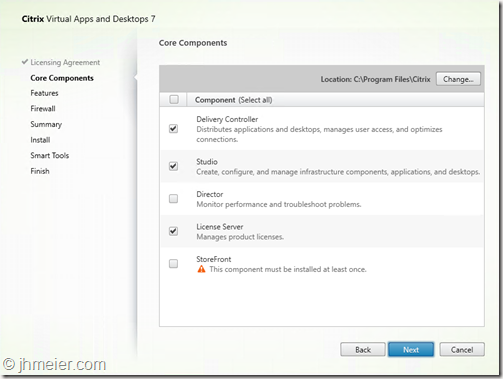

Delivery Controller

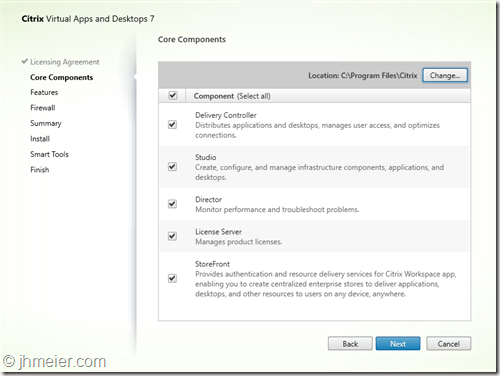

I couldn’t resist and selected Delivery Controller. After accepting the license agreement the Component selection appeared. I selected all available Components.

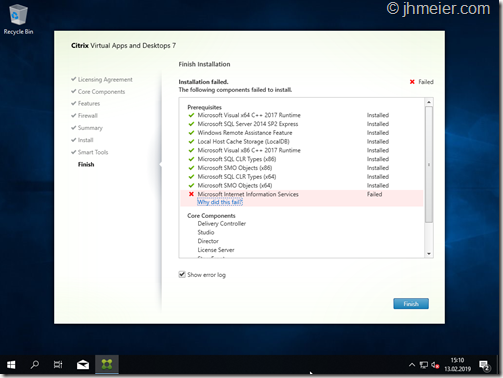

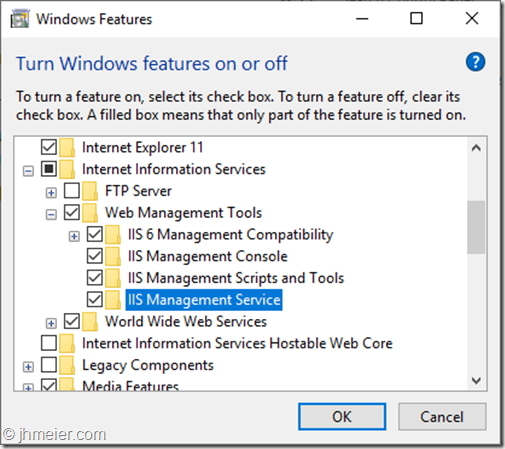

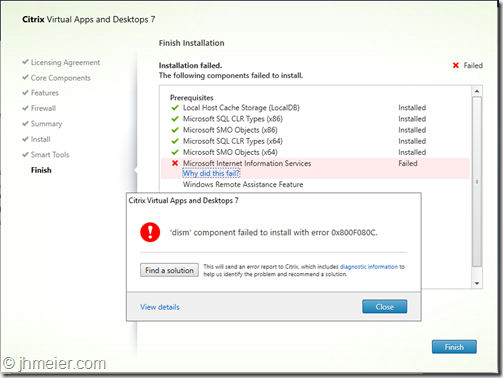

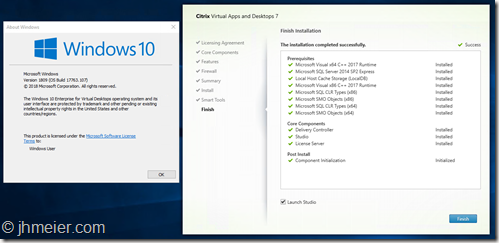

The first prerequisites were installed without issues, but the installation of the Microsoft Internet Information Services (IIS) failed. The error just showed that something had failed with DISM.

So I just installed IIS with all available components manually.

But even after installing all available IIS components, the Delivery Controller installation still failed at the step Microsoft Internet Information Services.

I decided not to look deeper into this issue for now and removed all components that require IIS: Director and StoreFront.

The installation of the other components worked without any issues. As you can see, all selected components were installed on Windows 10 Enterprise for Virtual Desktops.

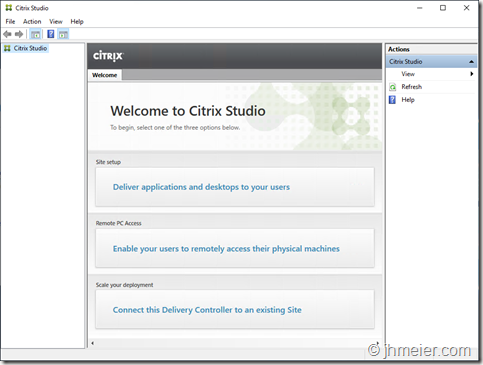

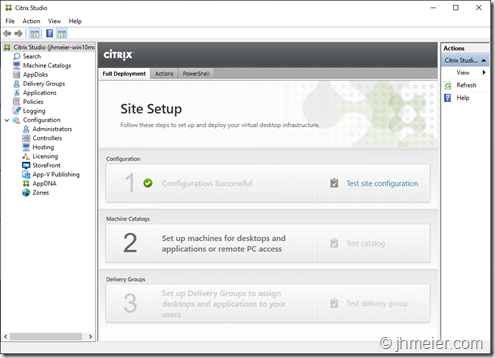

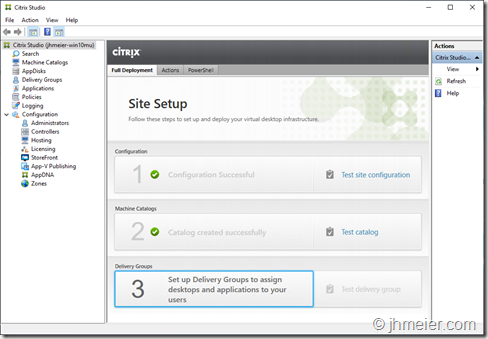

The Citrix Studio opens and asks for a Site Configuration – as on every supported Server OS.

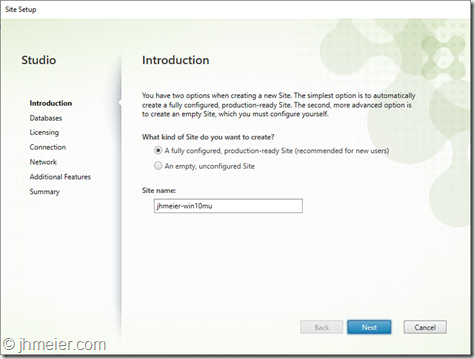

Time to create a Site on our Windows 10 Delivery Controller.

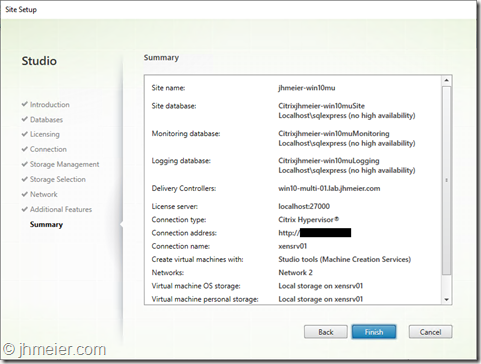

The Summary shows that the Site was successfully created.

And the Citrix Studio now shows the option to create a Machine Catalog.

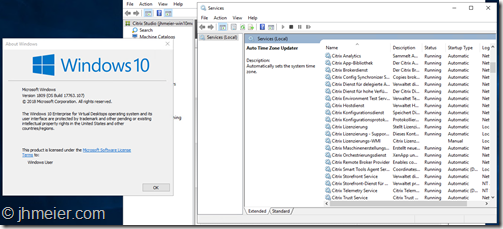

And a last check of the services: Everything is running fine.

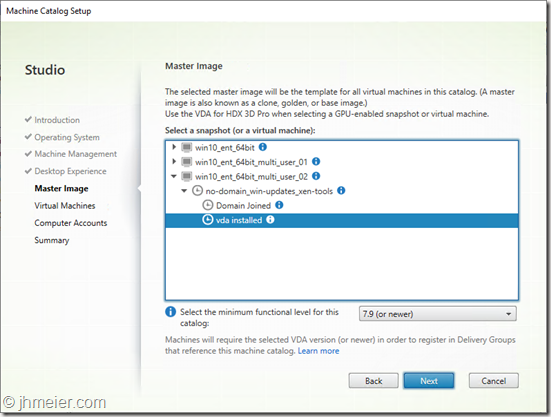

Virtual Delivery Agent

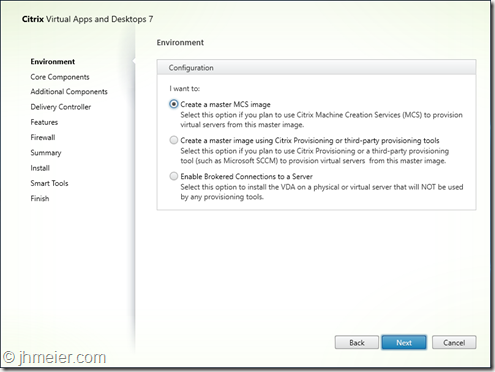

Before we can create a Machine Catalog we need a Master VDA. So let’s create another VM with Windows 10 Enterprise for Virtual Desktops. Repeat the steps from above (domain join with two reboots) and connect the Citrix Virtual Apps and Desktops ISO. This time we select Virtual Delivery Agent for Windows 10 Enterprise for Virtual Desktops. To be able to create multiple VMs from this Master VM using MCS, select Create a master MCS image.

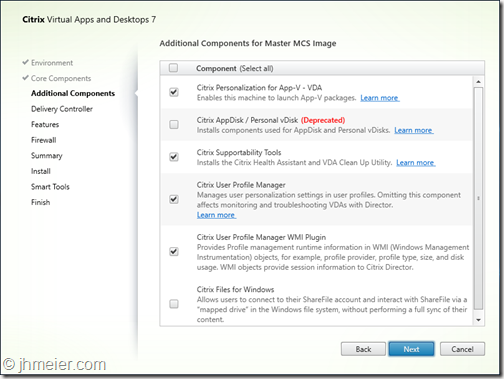

The next step is to select the Additional Components – like in every other VDA Installation.

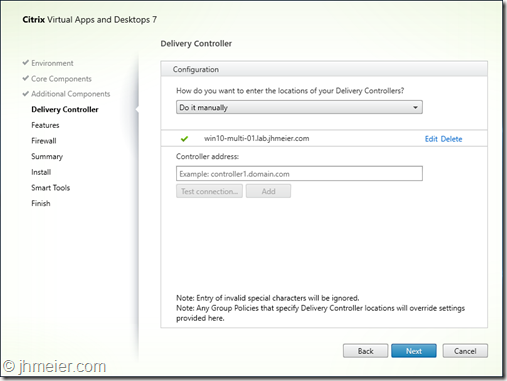

Now I entered the name of the Windows 10 VM where I previously installed the Delivery Controller.

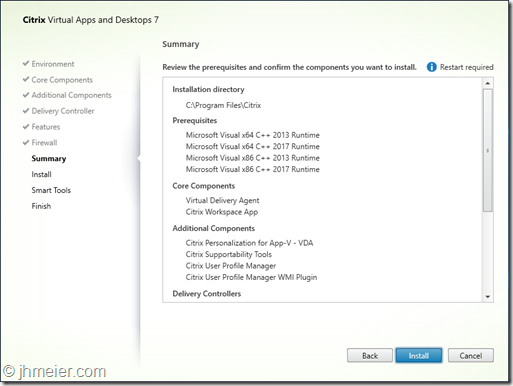

The summary shows the selected components and the Requirements.

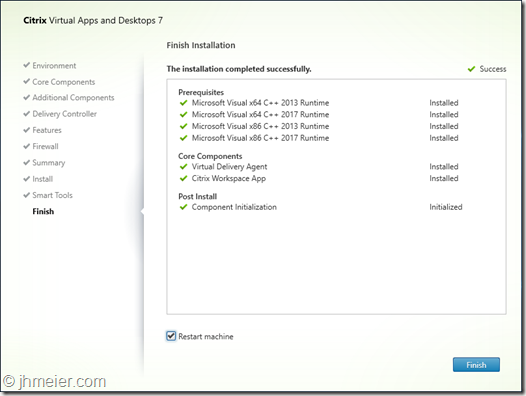

… and the installation finished without any errors.

The VDA was successfully installed on Windows 10 Enterprise for Virtual Desktops.

Machine Catalog

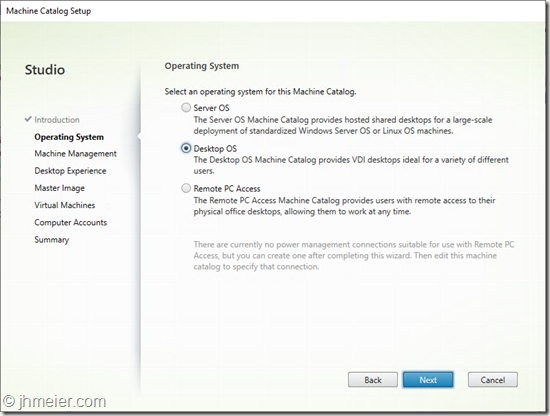

Time to deploy some VMs – so switch back to the Studio and start the Machine Catalog Configuration.

The first step is to select the Operating System. Normally this is easy: Server OS for Windows Server 2016 / 2019, Desktop OS for Windows 10. But this time it’s tricky, as we have a Desktop OS with Server OS functions. I first decided to take Desktop OS, although I thought Server OS might fit better.

Select the just created Master-VM and configure additional Settings like CPU, RAM, etc..

Finally enter a name for the Machine Catalog and let MCS deploy the configured VMs.

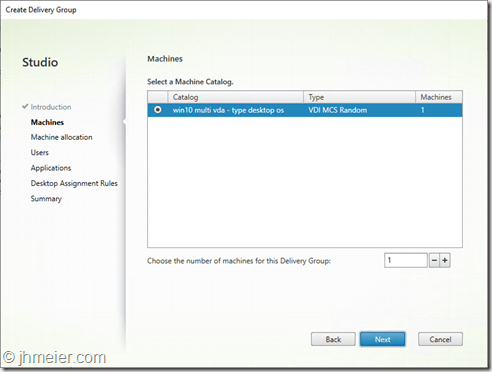

Delivery Group

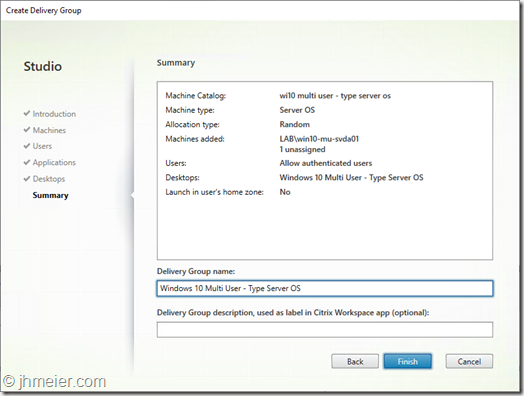

As we now have a Machine Catalog with VMs, it was time to create a Delivery Group so that we can allow users to access the VM(s).

I selected the created Machine Catalog…

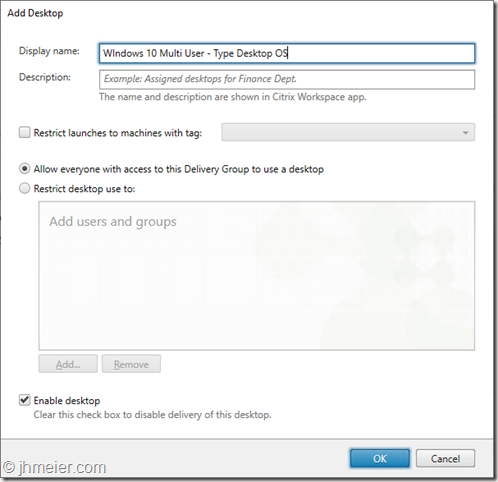

… and added a Desktop to the Delivery Group.

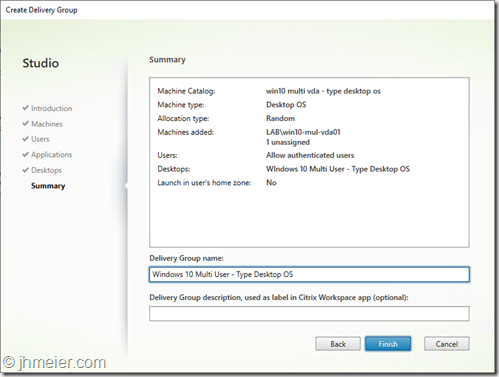

The Summary shows the configured settings.

Now we have everything ready on the Delivery Controller – just the user access is missing.

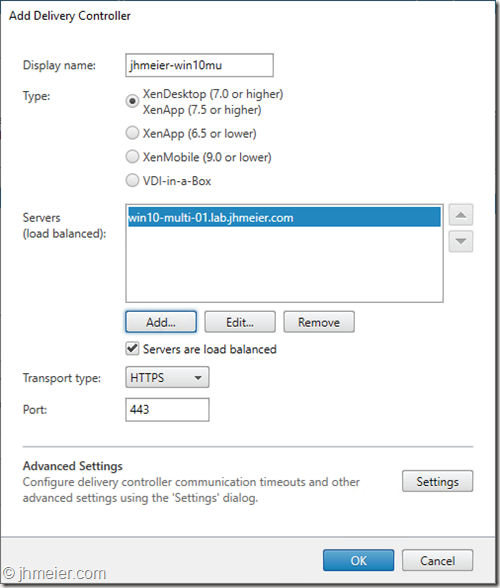

As the installation of StoreFront failed I added the Windows 10 Delivery Controller to my existing StoreFront Deployment.

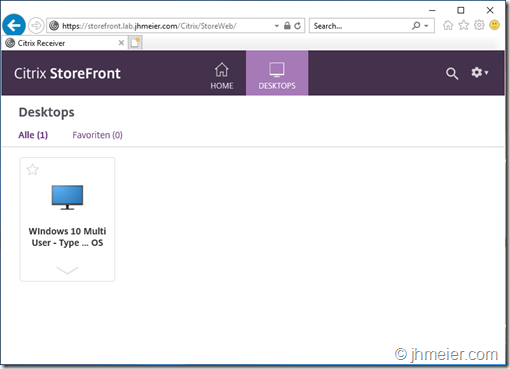

After logging in the User can see the just published Desktop. Unfortunately, the user is not able to start the published Desktop.

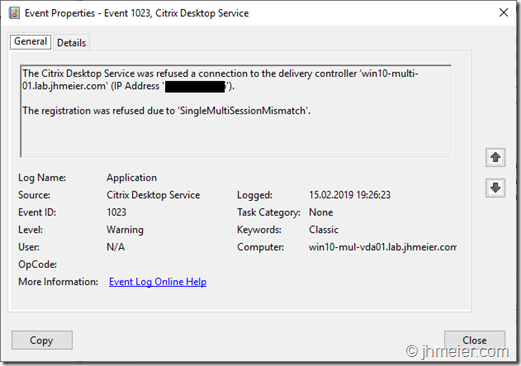

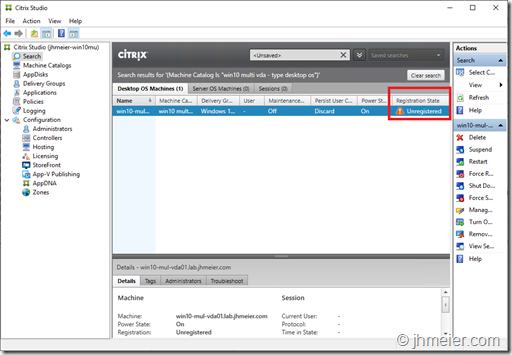

So back to the Delivery Controller. Oh – the VDA is still unregistered with a “!” in front of the Status.

Let’s check the VDA-Event-Log:

The Citrix Desktop Service was refused a connection to the delivery controller ‘win10-multi-01.lab.jhmeier.com’.

The registration was refused due to ‘SingleMultiSessionMismatch’.

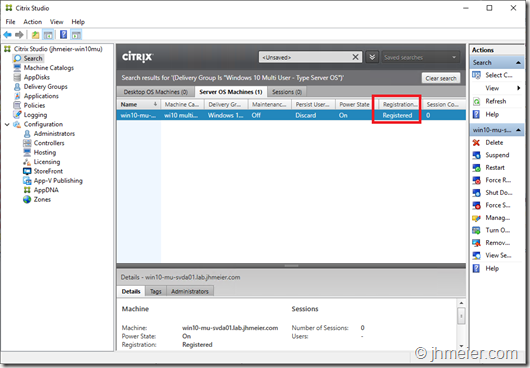

Looks like my feeling was correct that the Machine Catalog should have the Operating System Server OS. I created another Machine Catalog and Delivery Group – this time with the type Server OS.

Let’s boot a VDA and check the Status: Registered.

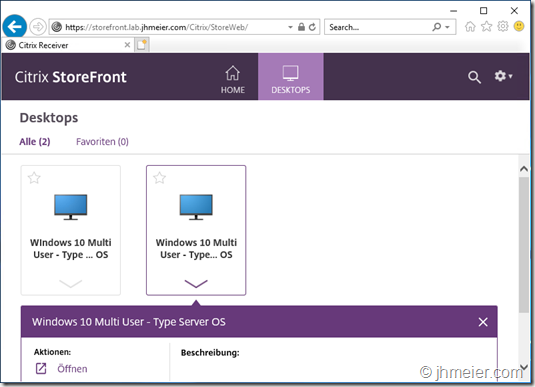

Time to check the user connections. After logging on to StoreFront the user now sees the second published Desktop – Type Server OS.

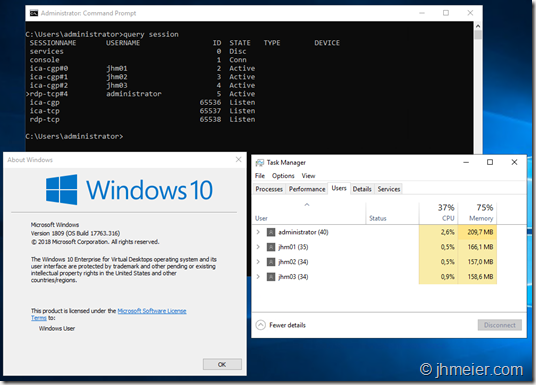

And this time the connection is possible. As you can see we have now multiple users connected using ICA to the same Windows 10 VDA.

At this point I would like to sum up what worked and what didn’t work until now:

What worked:

- Multiple RDP connections to one Windows 10 VM – Domain-Joined and not Domain-Joined

- Multiple ICA connections to Domain-Joined Windows 10 VDA

- Delivery Controller on Windows 10 (including Studio and License Server)

What did not work:

- Installation of Citrix Components that require an IIS (StoreFront and Director)

If the last point can be fixed, it would be possible to create an “all-in” Windows 10 Citrix VM. Thus you could run all Citrix infrastructure components on one VM. Not supported of course, but nice for some testing. Besides that, it’s really interesting to see that next to the VDA a lot of the Delivery Controller components also just work out of the box on Windows 10 Enterprise for Virtual Desktops.

When we look at the behaviour of the Citrix components, it looks like all Server features (including RDSH) that the Citrix installer uses to detect whether it is a Desktop or Server OS are integrated into Windows 10 Enterprise for Virtual Desktops.

That’s it for now. I have some other things I will test with this Windows 10 edition, and if I find something interesting another blog post will follow.

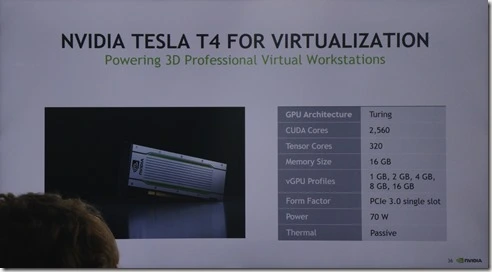

During NVIDIA GTC 2018 in Europe NVIDIA announced the new Turing T4 graphics card. On Twitter this card got a lot of love, as it looked like a good evolution of the (now) mainly suggested Tesla P4 (you remember: last year it didn’t even appear on NVIDIA’s slides, now it’s their primary suggestion. Thanks for listening, NVIDIA). I saw the first details about the card in John Fanneli’s presentation on the first day. They looked really promising. Here is the picture I took showing the card details (sorry for the head in front of it, I didn’t expect to need it for a blog post…):

You can find the P4 data in this PDF.

Let us compare the data of both cards that we have until now.

| | P4 | T4 |
|---|---|---|
| GPU | Pascal | Turing |
| CUDA Cores | 2560 | 2560 |
| Frame Buffer (Memory) | 8 GB | 16 GB |
| vGPU Profiles | 1 GB, 2 GB, 4 GB, 8 GB | 1 GB, 2 GB, 4 GB, 8 GB, 16 GB |
| Form Factor | PCIe 3.0 single slot | PCIe 3.0 single slot |
| Max Power | 75 W | 70 W |
| Thermal | Passive | Passive |

As you can see, both cards are really similar. They just need a single slot. Thus, you can put up to six cards (or sometimes eight; yes, there are a few servers that support eight(!) cards, just check the HCL) in one server and have a limited power consumption. The main difference is that the T4 uses the new Turing chip and has double the Frame Buffer (16 GB). That means you can run 16 VMs, each with a 1 GB Frame Buffer, on this card. Although I don’t know if the Turing chip offers enough performance for that (as I have no test card yet, @NVIDIA), it might be a good option to have 8 VMs with 2 GB Frame Buffer on one card. That would help in many situations where 1 GB Frame Buffer is not enough. With a P4 you could only put 4 such VMs on one card.
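The density numbers above are simple integer division of the card’s Frame Buffer by the profile size. As a minimal Python sketch (the function name `vms_per_card` is made up for this example, and it ignores everything except Frame Buffer, e.g. GPU performance):

```python
# Frame Buffer per card in GB, as listed in the comparison table.
FRAME_BUFFER_GB = {"P4": 8, "T4": 16}


def vms_per_card(frame_buffer_gb: int, profile_gb: int) -> int:
    """Number of VMs that fit on one card for a given vGPU profile size."""
    if profile_gb <= 0:
        raise ValueError("profile size must be positive")
    return frame_buffer_gb // profile_gb


if __name__ == "__main__":
    for card, frame_buffer in FRAME_BUFFER_GB.items():
        for profile in (1, 2):
            count = vms_per_card(frame_buffer, profile)
            print(card, "with", profile, "GB profile:", count, "VMs")
```

This only reflects the memory side of the sizing. Whether the chip has enough performance for that many VMs is a separate question.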

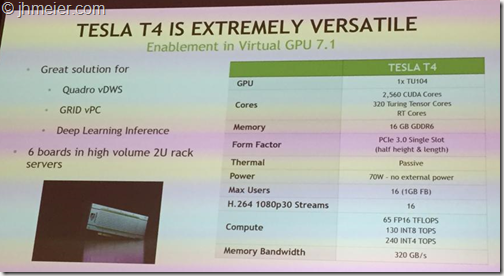

So, at this point I also thought that the T4 is a good evolution of the P4. The only main point I was missing was the price, as this could be an argument against the card. But then I attended another session. Here they also showed some details about the T4. There was one detail I noticed quite late and thus I was too late to take a picture. Luckily my friend Tobias Zurstegen took this picture showing the technical information:

Two details are shown here that I didn’t find anywhere else. First there are the Max Users, which wouldn’t be too hard to calculate when you know that the smallest profile is a 1B profile (= 1 GB Frame Buffer). But next to that there is the number of H.264 1080p 30 Streams. So, let’s add these points to our list.

| | P4 | T4 |
|---|---|---|
| GPU | Pascal | Turing |
| CUDA Cores | 2560 | 2560 |
| Frame Buffer (Memory) | 8 GB | 16 GB |
| vGPU Profiles | 1 GB, 2 GB, 4 GB, 8 GB | 1 GB, 2 GB, 4 GB, 8 GB, 16 GB |
| Form Factor | PCIe 3.0 single slot | PCIe 3.0 single slot |
| Max Power | 75 W | 70 W |
| Thermal | Passive | Passive |
| Max Users (VMs) | 8 | 16 |
| H.264 1080p 30 Streams | 24 | 16 |

What’s that? The number of H.264 1080p 30 Streams is lower on a T4 (16) than on a P4, which has 24 Streams. The T4 has 8 (!) Streams fewer than a P4?!? If you keep in mind that e.g. in an HDX 3D Pro environment each monitor of a user with activity requires one stream, that means that 8 users with dual monitors can already use up all available Streams. If you put more users on the same card, this might lead to a performance issue for the users as there are not enough H.264 Streams available. Unfortunately, I haven’t found anything on NVIDIA’s website that proves that this number of H.264 Streams is correct and was not just a typo on the slide.

But if it’s true, I am wondering why that happened. What did NVIDIA change so that the number of streams went down and not up? I would have expected at least 32 Streams (compared to the P4). If it was a card design change, that would be counterproductive. Let us hope it was just a typo on the slide.

If someone has found another official document which confirms (or refutes) this number, please let me know.

Should the number be correct, I hope NVIDIA listens again and changes this before the card is released. I see this as a big bottleneck for many environments, especially as many people don’t know about the number of streams and then wonder why performance is bad although the graphics chip itself is not under heavy load. They don’t know that too many required H.264 streams can also lead to poor performance. Besides that, keep in mind that it’s now also possible to use H.265 in some environments, but using that possibly leads to fewer streams as its encoding is more resource intensive.
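The stream arithmetic discussed above can be sketched in a few lines of Python. The stream and user counts are the ones from the slide (which might contain a typo), the rule of one stream per active monitor is the simplification used in this post, and the function names are made up for this example.

```python
# Values as shown on the slide; not confirmed by an official NVIDIA document.
CARDS = {
    "P4": {"streams": 24, "max_users": 8},
    "T4": {"streams": 16, "max_users": 16},
}


def streams_required(users: int, monitors_per_user: int) -> int:
    """Streams needed if every monitor of every user shows activity."""
    return users * monitors_per_user


def users_within_budget(available_streams: int, monitors_per_user: int) -> int:
    """Users that can be served before the card runs out of streams."""
    if monitors_per_user <= 0:
        raise ValueError("monitors_per_user must be positive")
    return available_streams // monitors_per_user


if __name__ == "__main__":
    monitors = 2
    for name, card in CARDS.items():
        needed = streams_required(card["max_users"], monitors)
        served = users_within_budget(card["streams"], monitors)
        print(name, "needs", needed, "streams at full density,",
              "has", card["streams"], "and covers", served, "dual monitor users")
```

With dual monitors a fully loaded T4 would need twice as many streams as the slide lists, while a fully loaded P4 stays within its limit.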

It’s been quite a long time since I wrote an article for the German magazine IT-Administrator, so I thought it was time for another one. You can find it in the current issue of IT-Administrator. The article describes how you can use Skype for Business in Citrix environments and benefit from the Citrix RealTime Optimization Pack.

Citrix wants to enable the smooth use of Skype for Business in VDI environments and has released the Citrix RealTime Optimization Pack for this purpose. We take a look at how this plug-in works and how you can implement it in your environment.

When you are using NVIDIA GRID, you might know that NVIDIA started to enforce license checking in version 5.0. This means that if no license is available, the following restrictions are applied to the user:

- Screen resolution is limited to no higher than 1280×1024.

- Frame rate is capped at 3 frames per second.

- GPU resource allocations are limited, which will prevent some applications from running correctly.

For whatever reason, NVIDIA has not enabled a grace period after a VM has started, so the restrictions are active until the VM has successfully checked out a license. As a result, a user who connects to a freshly booted VM gets a limited session experience. Furthermore, he has to disconnect and reconnect his session after the NVIDIA GRID license was applied, e.g. to work with a higher resolution. Currently I know of three workarounds for this issue:

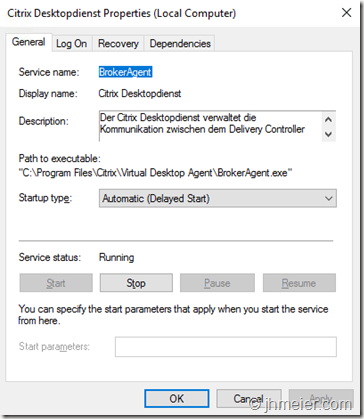

1. Change the Citrix Desktop Service startup to Automatic (Delayed Start)

2. Configure a Settlement Time before a booted VM is used for the affected Citrix Delivery Groups

3. NVIDIA Registry Entry – Available since GRID 5.3 / 6.1

All of these workarounds have their limitations, so I would like to show you each of them.

Changing the Citrix Desktop Service to Automatic (Delayed Start)

When you change the service to Automatic (Delayed Start), it will not run directly after the VM has booted. As a result, the VM registers later at the Delivery Controller and has time to check out the required NVIDIA license before the Delivery Controller brokers a session to it.

To change the service to Automatic (Delayed Start), open the Services console on your master image (Run => services.msc) and open the properties of the Citrix Desktop Service. Change the Startup type to Automatic (Delayed Start) and confirm the change with OK.

Now update your VMs from the master image and that’s it. The VMs should now have enough time to grab an NVIDIA license before they register with the Delivery Controller.

The downside of this approach is that you need to configure it again with every VDA update / upgrade. Instead of doing this manually, you can run a script with the following command on your maintenance VM before every shutdown. The command changes the Startup type, so you cannot forget to change it (important: there must be a space after “start=”).

sc config BrokerAgent start= delayed-auto

In PowerShell the command needs to be modified a little bit, because in Windows PowerShell sc is an alias for Set-Content:

& cmd.exe /c sc config BrokerAgent start= 'delayed-auto'

Configure a Settlement Time before a booted VM is used

Alternatively, you can configure a Settlement Time for a Delivery Group. This means that after the VM has registered with the Delivery Controller, no sessions are brokered to the VM during the configured time. Again, the VM has enough time to request the necessary NVIDIA license. However, this approach also has a downside: if no other VMs are available, users will still be brokered to just booted VMs although the Settlement Time has not ended. This means that if you didn’t configure enough standby VMs to be up and running when many users connect, they might still be brokered to a just booted VM (without a license).
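The fallback described above can be illustrated with a small Python model. This is only a sketch of the behaviour as I described it, not Citrix’s actual brokering logic. The Vm class and the pick_vm function are made up for the illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Vm:
    name: str
    registered_at: datetime  # time the VM registered with the Delivery Controller


def pick_vm(vms: list[Vm], now: datetime, settlement: timedelta) -> Optional[Vm]:
    """Prefer a VM whose Settlement Time has elapsed, otherwise fall back."""
    settled = [vm for vm in vms if now - vm.registered_at >= settlement]
    if settled:
        return settled[0]
    # Fallback from the text: nothing else is free, so a just booted
    # (possibly still unlicensed) VM is used anyway.
    return min(vms, key=lambda vm: vm.registered_at, default=None)
```

The second branch is the reason why enough standby VMs are needed: without them the Settlement Time does not protect the user.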

To check the currently active Settlement Time for a Delivery Group open a PowerShell and enter the following commands:

Add-PSSnapin Citrix*

Get-BrokerDesktopGroup -Name "DELIVERY GROUP NAME"

Replace DELIVERY GROUP NAME with the name of the Delivery Group you would like to check.

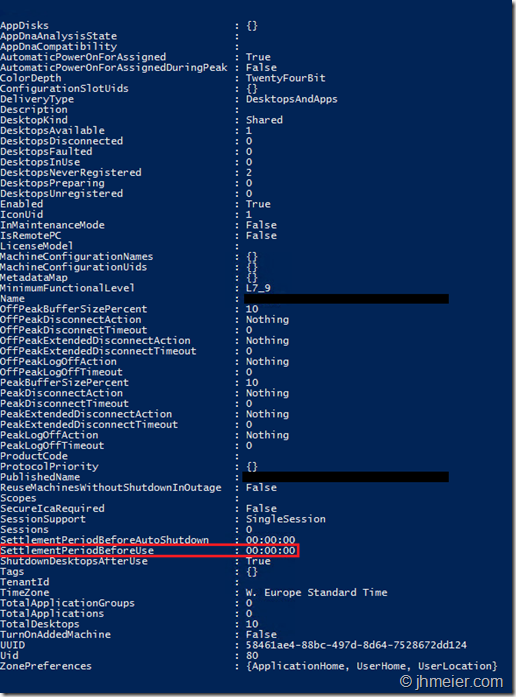

You now get some information about the Delivery Group. The interesting part is SettlementPeriodBeforeUse in the lower area. By default, it should be 00:00:00.

To change this time, enter the following command:

Set-BrokerDesktopGroup -Name "DELIVERY GROUP NAME" -SettlementPeriodBeforeUse 00:05:00

With the above setting, the Settlement Time is changed to 5 minutes. Do not forget to replace DELIVERY GROUP NAME with the actual name.

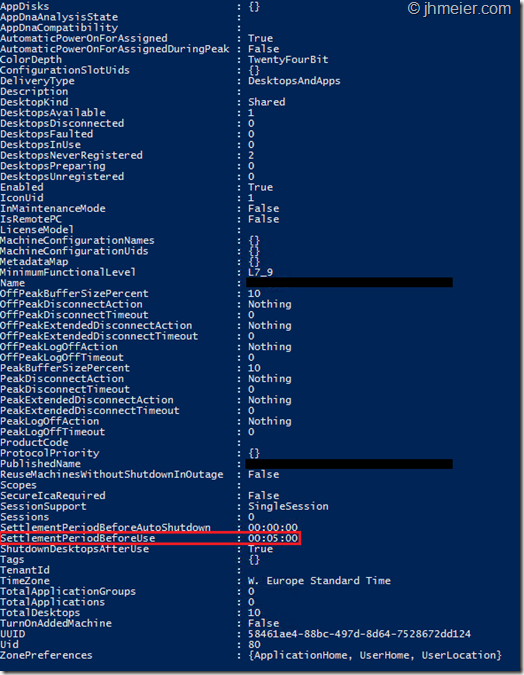

If you now enter the command again to see the settings for your Delivery Group, you will notice that SettlementPeriodBeforeUse has changed.

NVIDIA Registry Entry – Available since GRID 5.3 / 6.1

With GRID release 5.3 / 6.1, NVIDIA has published a registry entry that is also meant to fix this. The description is a little bit limited, but if I understood it correctly, it changes the driver behavior so that all restrictions are lifted even when the license is applied after the session was started. Before you can add the registry setting, you need to install the NVIDIA drivers in your (master) VM and apply an NVIDIA license. When the license was successfully applied, create the following registry entry:

Path: HKLM\SOFTWARE\NVIDIA Corporation\Global\GridLicensing

Type: DWORD

Name: IgnoreSP

Value: 1

After creating it, you must restart the VM (and update your Machine Catalogs if it is a master VM). Honestly, I wasn’t able to test this last solution myself, so I can’t tell whether it fixes the issue every time or only most of the time.
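If you prefer importing the value over creating it by hand, the entry can also be expressed as a .reg file. The following Python sketch only builds the file content. The function name is my own, while the path, name and value are the ones listed above. Like the registry entry itself, I have not tested this against a GRID installation.

```python
def grid_ignore_sp_reg(value: int = 1) -> str:
    """Build the text of a .reg file for the IgnoreSP DWORD described above."""
    key = r"HKEY_LOCAL_MACHINE\SOFTWARE\NVIDIA Corporation\Global\GridLicensing"
    lines = [
        "Windows Registry Editor Version 5.00",
        "",
        f"[{key}]",
        f'"IgnoreSP"=dword:{value:08x}',  # .reg files store DWORDs as 8 hex digits
        "",
    ]
    return "\r\n".join(lines)


print(grid_ignore_sp_reg())
```

Save the output to a file with the extension .reg and import it on the master VM before the restart.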

That’s it – hope it helps (and NVIDIA will completely fix this issue in one of their next releases).

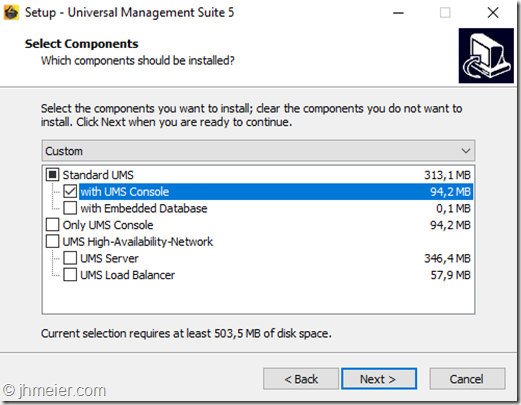

When you reach a point where you manage many IGEL Thin Clients, you might want the IGEL UMS to remain available even if the server running the UMS fails. Furthermore, you can use a second server to reduce the load on a single system, for example when multiple clients update their firmware at the same time and all download it from the IGEL UMS server. To reach this goal you have two options:

1. Buy the IGEL High-Availability Option for UMS (including a Load Balancer)

2. Load Balance two UMS that use the same Database with a Citrix NetScaler

In this blog post, I would like to show you how to realize the second option. Before we can start with the actual configuration, we need to have a look at a few requirements for this configuration.

1. Database Availability

When you create a highly available UMS server infrastructure, you should also make the required database highly available. Otherwise, all configured UMS servers stop working when the database server fails.

2. Client IP Forwarding

The UMS servers need to know the actual IP of the Thin Clients. If you did not forward the client IP, all Thin Clients would appear with the same IP address. You then wouldn’t be able to send commands to a Thin Client or see its online status. Unfortunately, forwarding leads to another problem. The client connects to the load balanced IP of the UMS servers. This connection is forwarded (with the original client IP) to one UMS server, which replies directly to the Thin Client IP. The Thin Client now receives a reply from a different IP address than the one it initially connected to and ignores it. One (easy) way to fix this issue is to put the UMS servers in a separate subnet. Add a Subnet IP (SNIP) to the NetScaler in this subnet and configure this SNIP as the default gateway for the UMS servers. When doing this, the UMS servers receive the original client IP, but the reply to the client still passes through the NetScaler, which can then replace the server IP with the load balanced IP address.

Now let’s start with the actual configuration. The first step is to install the Standard UMS (with UMS Console) on two (or more) Servers.

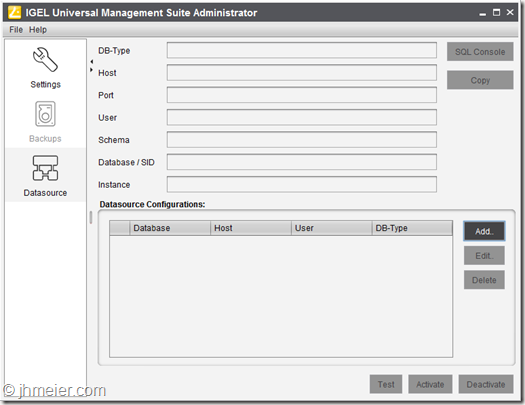

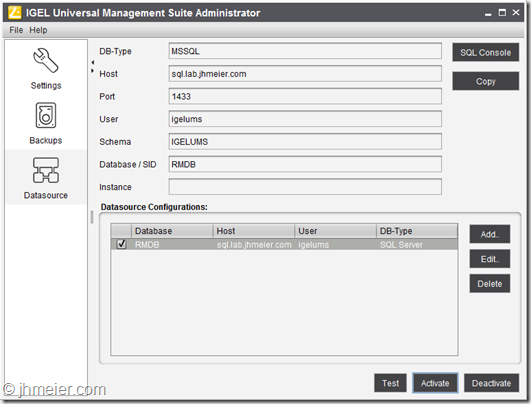

After the installation has finished successfully, it’s time to connect the external database (if you are unsure about some installation steps, have a look at the really good Getting Started Guide). To do so, open the IGEL Universal Management Suite Administrator and select Datasource.

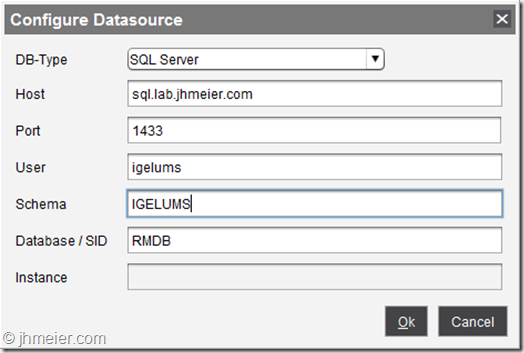

In this example, I will use a Microsoft SQL Always On cluster, but you can select any available database type that offers a high availability option. Of course this is not mandatory, but how does it help you when the UMS servers are highly available and the database is not? If the database server fails, the UMS would also be down, and you would still have a single point of failure.

Enter the Host name, Port, User, Schema and Database Name.

Keep in mind that the database is not created automatically. You have to create it manually beforehand. For a Microsoft SQL Server you can use the following script to create the database. After creating the database, don’t forget to make it highly available, e.g. using the Always On function.

If you prefer a different name, change rmdb to the required name. Besides that, replace setyourpasswordhere with a password. The user (CREATE USER) and schema (CREATE SCHEMA) names can also be changed.

CREATE DATABASE rmdb

GO

USE rmdb

GO

CREATE LOGIN igelums with PASSWORD = 'setyourpasswordhere',

DEFAULT_DATABASE=rmdb

GO

CREATE USER igelums with DEFAULT_SCHEMA = igelums

GO

CREATE SCHEMA igelums AUTHORIZATION igelums GRANT CONTROL to igelums

GO

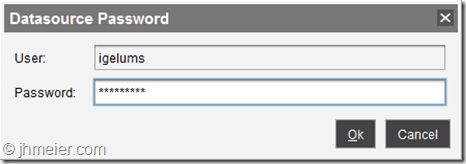

After confirming the connection details, you now see the connection. To enable the connection select Activate and enter the Password of the SQL User.

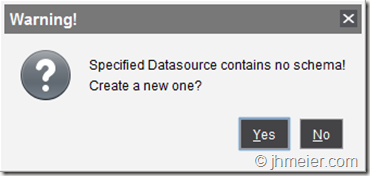

On the first server, you will get the information that there is no schema in the database and that it needs to be created. Confirm this with Yes.

You should now see an activated Datasource configuration. Repeat the same steps on the second UMS server. Of course, you don’t need to create another database. Just connect to the same database as with the first server.

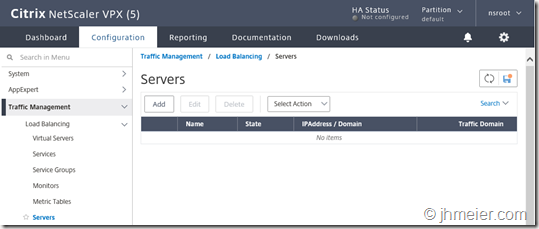

Time to start with the actual Load Balancing configuration on the Citrix NetScaler. Open the Management Website and switch to Configuration => Traffic Management => Servers

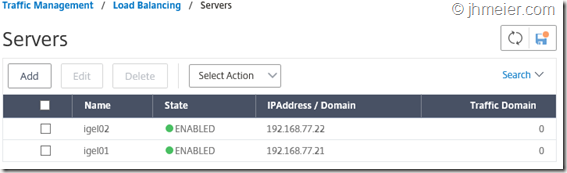

Select Add to create the IGEL UMS servers. Enter the Name and either the IP Address or the Domain Name.

Repeat this for all UMS servers (in my example I added two servers).

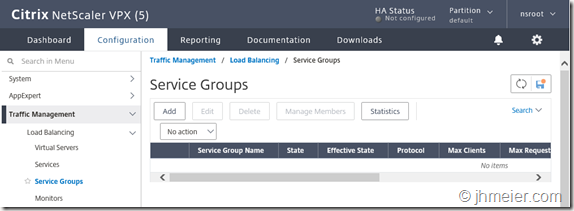

Now we need Services or a Service Group for all UMS servers. I personally prefer Service Groups, but if you normally use Services, that is also possible.

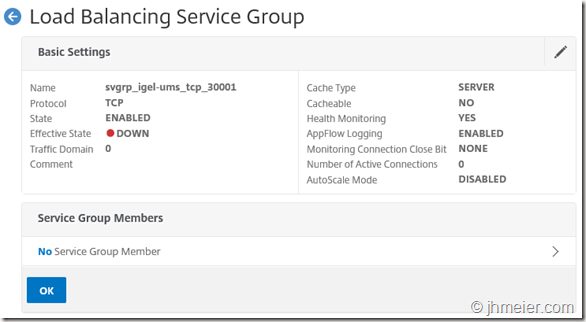

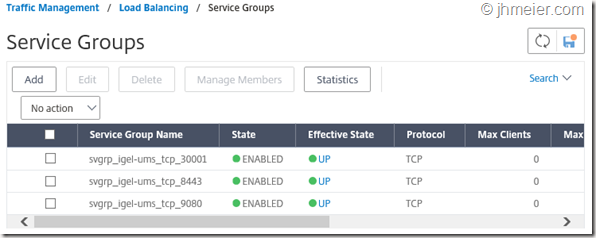

After switching to Service Groups, select Add again to create the first UMS Service Group. In total, we need three Service Groups.

Port 30001: Thin Client Connection Port

Port 8443: Console Connection Port

Port 9080: Firmware Updates

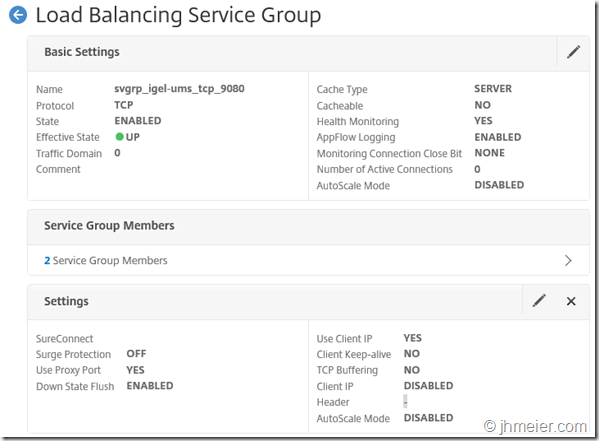

The first one we create is the Service Group for Port 30001. Enter a Name and select TCP as the Protocol. The other settings don’t need to be changed.

Now we need to add the Service Group Members. To do so, select No Service Group Member.

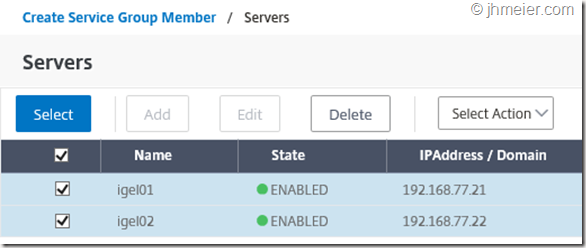

Mark the UMS Servers created in the Servers area and confirm the selection with Select.

Again, enter the Port number 30001 and finish with Create.

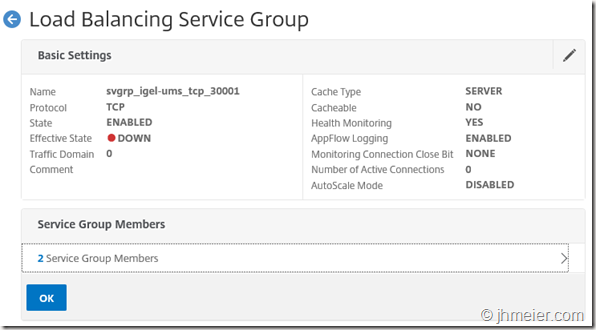

The Service Group now contains two Service Group Members.

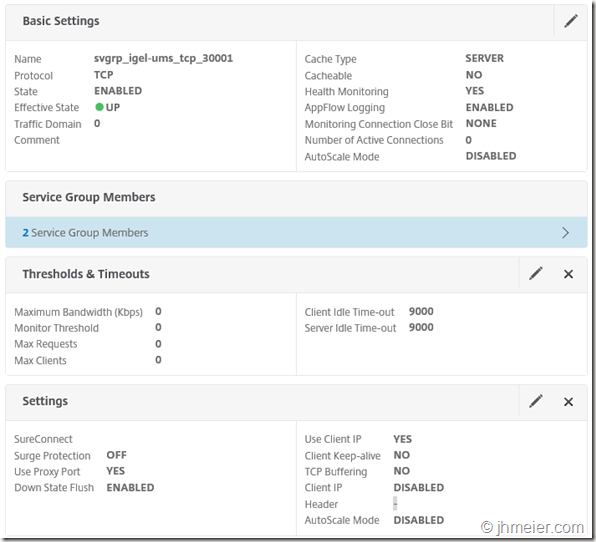

As mentioned at the beginning, we need to forward the client IP to the UMS servers. Otherwise, every client would have the same IP, the NetScaler Subnet IP. To do so, edit the Settings (not the Basic Settings!) and enable Use Client IP. Confirm the configuration with OK.

That’s it – the Service Group for Port 30001 is now configured.

Repeat the same steps for Port 8443, but do not enable Use Client IP. Otherwise, you will not be able to connect to the UMS servers via the load balanced IP / name from inside the IP range of the UMS servers themselves.

Finally, you need to create a Service Group for Port 9080 – this time you can again forward the Client IP.

At the end, you should have three Service Groups.

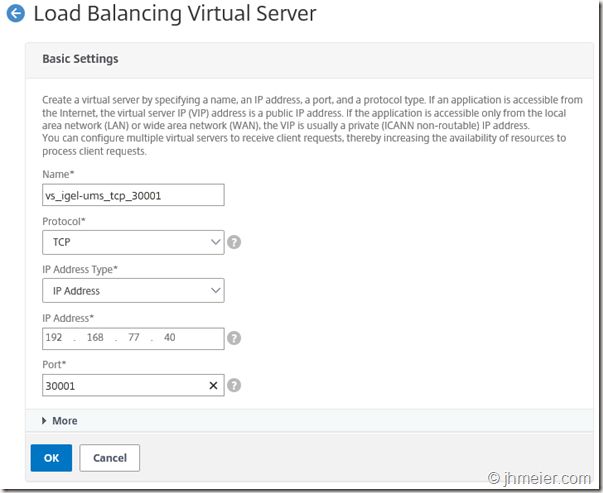

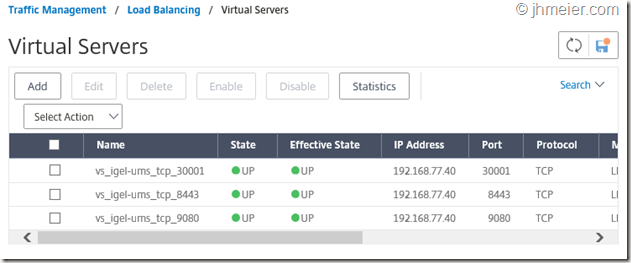

Time to create the actual client connection points – the Virtual Servers (Traffic Management => Load Balancing => Virtual Servers).

Like before select Add to create a new Virtual Server. Again, we need three virtual servers for the Ports 30001, 8443 and 9080.

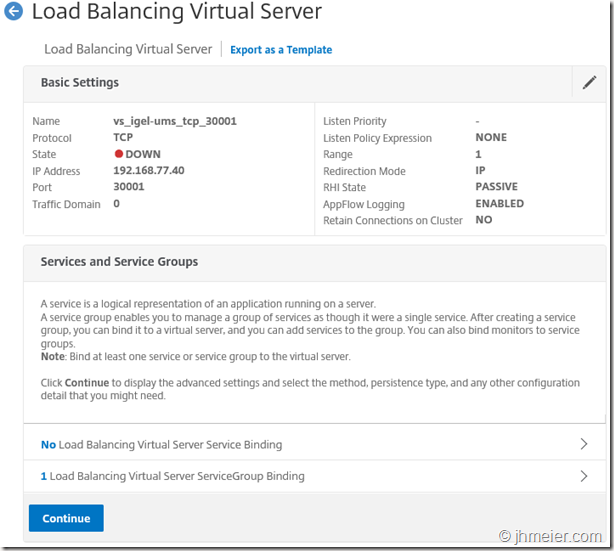

The first Virtual Server we create is for Port 30001. Enter a Name and choose TCP as the Protocol. Furthermore, enter a free IP Address in the separate Subnet of the UM-Servers. The Port is of course 30001.

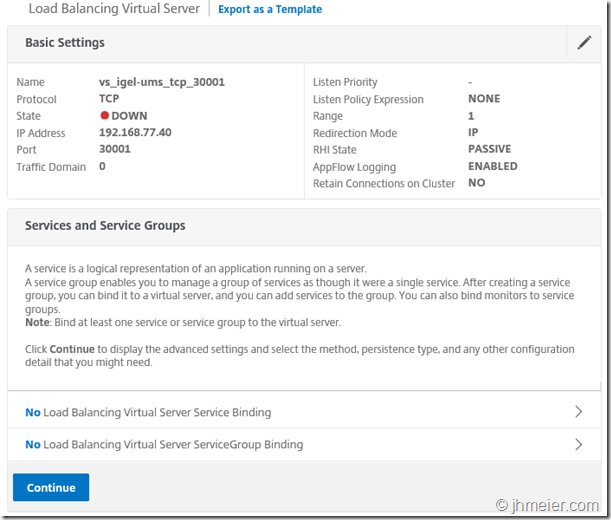

After this, we need to bind the Services or Service Group to this Virtual Server. If you created Services and not a Service Group, make sure you add the Services of all UMS servers. To add a created Service Group, click on No Load Balancing Virtual Server Service Group Binding.

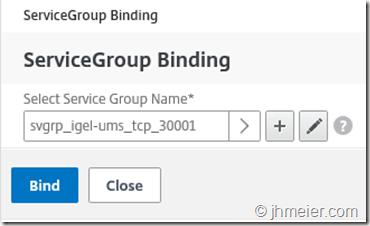

Select the Service Group for Port 30001 and confirm the selection with Bind.

The Service Group is now bound to the Virtual Server. Press Continue to get to the next step.

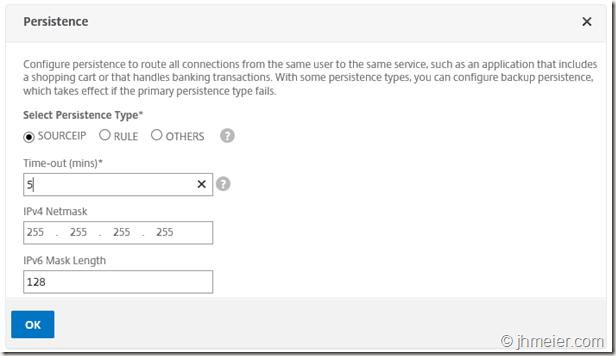

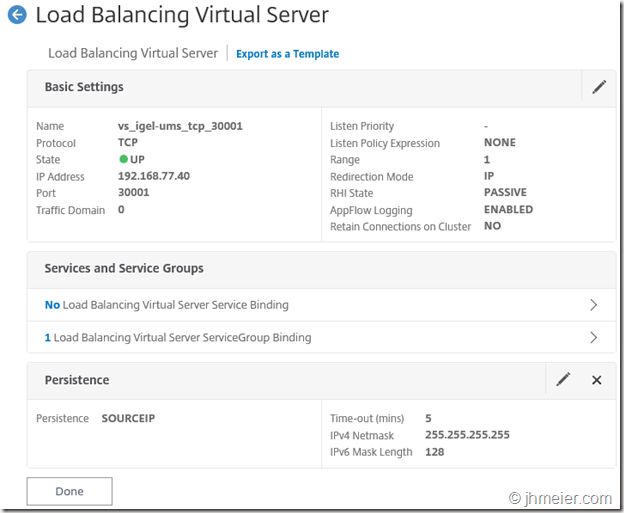

When a client connects, we need to make sure it always connects to the same UMS server after the initial connection and does not flip between them. When a client has closed the connection or a UMS server has failed, it’s of course OK if the client connects to the other UMS server. For this, we need to configure Persistence. As Persistence Type, we select Source IP, and the Time-Out should be changed to 5. The IPv4 Netmask is 255.255.255.255. Confirm the Persistence with OK.
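To show what Source IP persistence does, here is a toy model in Python. It is not how the NetScaler implements it. The class name, the round robin choice, the assumption that the Time-Out of 5 means minutes, and the assumption that every request refreshes the entry are all mine.

```python
import itertools
import time


class SourceIpPersistence:
    """Toy model: pin each client IP to one backend for `timeout_s` seconds."""

    def __init__(self, servers, timeout_s=5 * 60, clock=time.monotonic):
        self.servers = list(servers)
        self.healthy = set(self.servers)   # remove a server here to simulate a failure
        self.timeout_s = timeout_s         # assumption: Time-Out 5 = 5 minutes
        self.clock = clock
        self._table = {}                   # client IP -> (server, last seen)
        self._next = itertools.cycle(self.servers)

    def pick(self, client_ip):
        if not self.healthy:
            raise RuntimeError("no healthy UMS server left")
        now = self.clock()
        server, seen = self._table.get(client_ip, (None, 0.0))
        expired = now - seen >= self.timeout_s
        if server is None or expired or server not in self.healthy:
            # New, expired or orphaned client: round robin over healthy servers.
            server = next(s for s in self._next if s in self.healthy)
        self._table[client_ip] = (server, now)
        return server
```

A client keeps its UMS server as long as it stays active and the server is up. Only after a failure or an expired entry is it handed to the other server, which is the behaviour we want for the Thin Clients.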

Finish the Virtual Server configuration with Done.

Repeat the same steps for the other two ports, so that you have three Virtual Servers at the end. Of course, all of them need to use the same IP address.

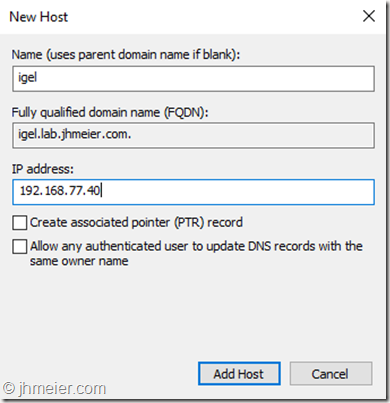

To make it easier to connect to the load balanced UMS servers, it is a good idea to create a DNS host entry, e.g. with the name Igel and the IP address of the Virtual Servers. If you added a DHCP option or DNS name for the Thin Client auto registration / connection, change it to the IP address of the Virtual Servers as well.
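Before pointing any client at the new name, it can be worth checking that all three Virtual Servers answer. The following Python sketch simply tries a TCP connection to each of the three ports. The function names are my own, and the host name igel is just the example name from above.

```python
import socket

# The three ports load balanced in this post.
UMS_PORTS = {
    30001: "Thin Client connection",
    8443: "Console connection",
    9080: "Firmware updates",
}


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_ums(host: str) -> dict:
    """Check every UMS port on the load balanced host name."""
    return {port: port_is_open(host, port) for port in UMS_PORTS}
```

Calling check_ums("igel") returns a dictionary with one True / False entry per port. A successful TCP connect only shows that the Virtual Server is reachable, not that the UMS behind it works correctly.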

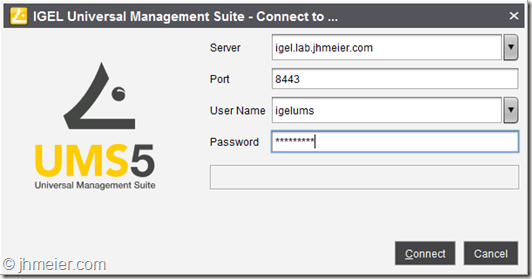

You can now start the IGEL Universal Management Suite and connect to the created Host-Name.

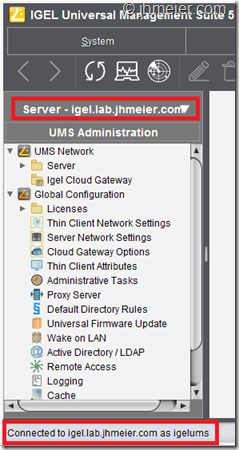

After a successful connection, you can see the used server name in the bottom left area and under the Toolbar.

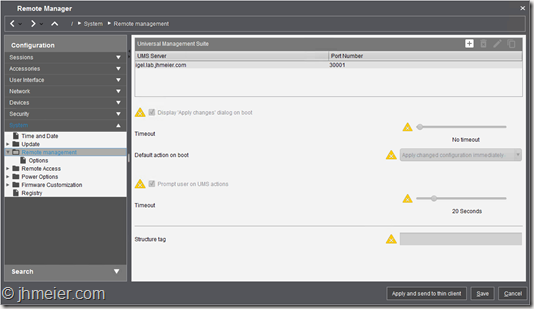

We now need to point the Thin Clients to the new load balanced UMS servers. You need to either modify an existing profile or create a new one. The necessary configuration can be found in the following area:

System => Remote Management => Universal Management Suite (right area).

Modify the existing entry and change it to the created host name. Save the profile and assign the configuration to your Thin Clients.

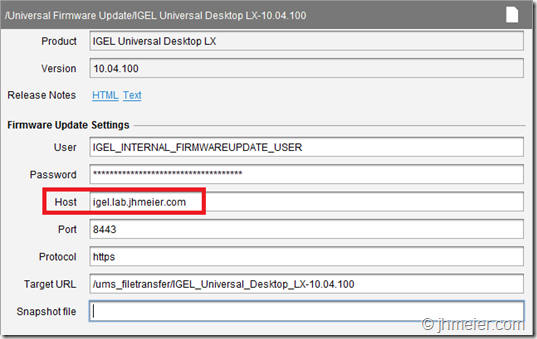

The last step is necessary to allow the Thin Clients to update / download a firmware even when one UMS server is not available. By default, a firmware always points to one UMS server and not to the load balanced host name. Therefore, switch to the Firmware area and select a firmware. Here you can find the Host. Change this to the created host name and save the settings. Repeat this for all required firmwares. If you download a new firmware, make sure you always modify the Host, otherwise the new firmware will only be available from one UMS server.

Of course, when you download or import a Firmware using the UMS this is only stored on one of the UMS Servers. To make the Firmware available on both UMS Servers you need to replicate the following folder (if you modified the UMS installation path this would be different):

C:\Program Files (x86)\IGEL\RemoteManager\rmguiserver\webapps\ums_filetransfer

A good way to do this is using DFS. Nevertheless, any replication technology is fine. Just make sure (when changing the Host entry for a firmware) that the firmware files are available on both UMS servers.
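Whatever replication technology you use, a quick comparison of both folders shows whether the firmware files really arrived. The following Python sketch compares two directory trees by SHA-256 hash. The function names are my own, and how you reach the folder on the second server (e.g. via a share) is up to you.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large firmware images do not fill the memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(1024 * 1024), b""):
            digest.update(chunk)
    return digest.hexdigest()


def file_digests(root: Path) -> dict:
    """Map each file below root (relative path) to its SHA-256 digest."""
    return {p.relative_to(root).as_posix(): sha256_of(p)
            for p in root.rglob("*") if p.is_file()}


def out_of_sync(primary: Path, secondary: Path) -> list:
    """Return the relative paths that are missing or different on either side."""
    a, b = file_digests(primary), file_digests(secondary)
    return sorted(name for name in a.keys() | b.keys() if a.get(name) != b.get(name))
```

Call out_of_sync with the ums_filetransfer folder of each UMS server. An empty list means both servers offer the same files.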

That’s it – hope this was helpful for some of you.

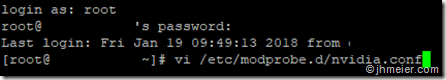

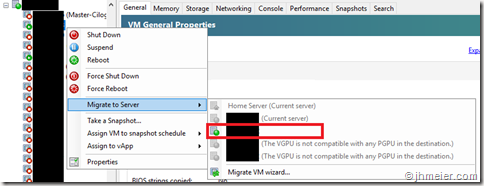

When you install NVIDIA GRID 6.0 on a XenServer, you need to manually enable live migration of VMs that use a (v)GPU. Otherwise, you see the following message when you try to migrate a VM:

The VGPU is not compatible with any PGPU in the destination

The steps to enable the migration are described in the GRID vGPU User Guide. To enable live migration, you need to connect to the console of the XenServer (e.g. using PuTTY) and log in as root. The next step is to edit (or create) the file nvidia.conf in the folder /etc/modprobe.d. To do so, enter the following command:

vi /etc/modprobe.d/nvidia.conf

Here you need to add the following line:

options nvidia NVreg_RegistryDwords="RMEnableVgpuMigration=1"

Save the change with :wq and restart the host. Repeat this for all hosts in the pool. After restarting, you can migrate a running VM with a (v)GPU to all hosts that have the changed setting. Hosts on which you haven’t configured the setting or which haven’t been rebooted are not available as a target server.
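If you have to repeat the edit on many hosts, the change itself is easy to script. The following Python sketch only works on the text of nvidia.conf and adds the line if it is not already there. The function name is my own. It does not merge the setting into an existing NVreg_RegistryDwords line with other values, so check the file by hand if you already use that option.

```python
OPTION_LINE = 'options nvidia NVreg_RegistryDwords="RMEnableVgpuMigration=1"'


def ensure_migration_option(conf_text: str) -> str:
    """Return conf_text with the migration option line present exactly once."""
    lines = conf_text.splitlines()
    if OPTION_LINE not in (line.strip() for line in lines):
        lines.append(OPTION_LINE)
    return "\n".join(lines) + "\n"
```

Running the function twice gives the same result, so it does not matter whether a host was already configured.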

My book about Citrix XenApp and XenDesktop 7.15 LTSR (German) is finally available in stores. It was a lot of work (and a lot of learning), but it was also fun to try out some less frequently used features. You can find more details here.

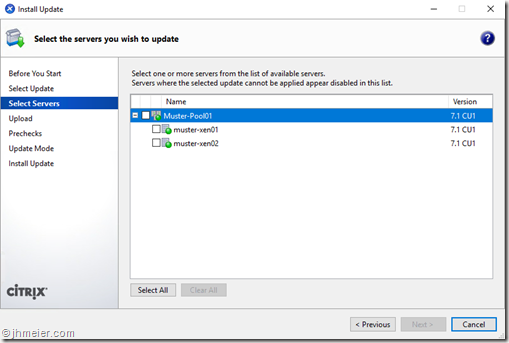

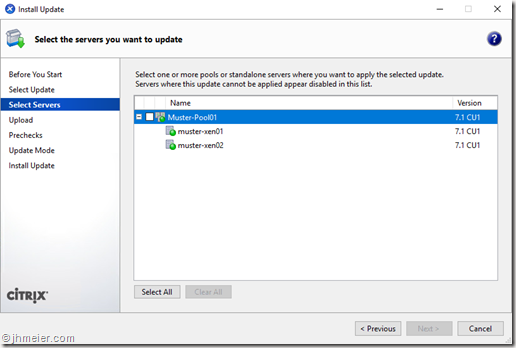

The Citrix XenCenter offers an easy way to update your XenServer (pools). Furthermore, most updates can nowadays be installed without a reboot. Only a few require a reboot or an XE toolstack restart. This process is fully automated: the first host is updated and (if necessary) rebooted, then the second one follows, and so on. To reboot a host, the VMs are migrated to another host. This is fine as long as you don’t use local storage for your VMs. (I don’t want to discuss here whether this makes sense or not!) When the VMs are using local storage, they cannot be migrated automatically. When they are deployed using MCS, they cannot even be migrated manually (the base disk would still be on the initial host). In the past, this was not a problem. You could just put the VMs into maintenance mode and update one host.

To do so, you started the Install Update Wizard, selected the updates you wanted to install, and had the possibility to select the host you wanted to update:

When the update was finished, you disabled maintenance mode on the corresponding VMs and enabled it on the VMs on the next host. After some time, the VMs on the second host were no longer in use and you could update the second host. Since XenCenter 7.2, this is no longer possible. After selecting an update, you can only choose to update the whole pool:

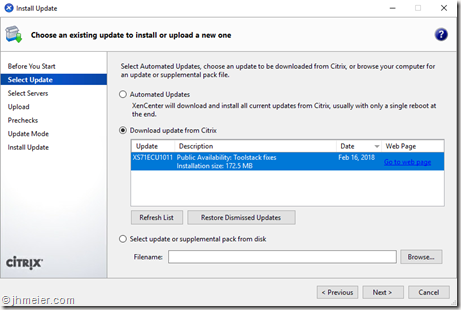

Luckily, there is a small “workaround” that uses XenCenter to download the updates and copy them to the XenServer. (I really like the update overview in XenCenter, no hassle looking up online which updates are available.) To use this workaround, start the Install Update Wizard and select the update you would like to install.

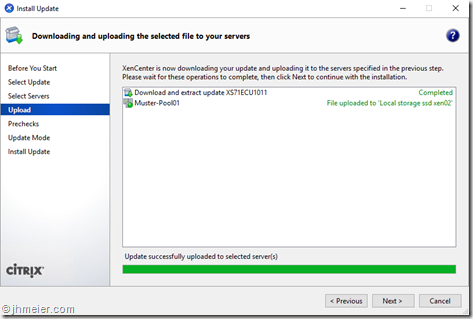

In the next step, select the pool containing the server(s) on which you would like to install the update. Now the update is downloaded and transferred to the pool. It is important that you do not press Next now! Do not close the dialog either: when you close it (Cancel), the update is deleted from the pool.

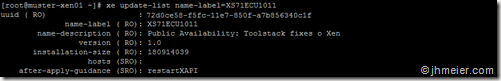

Instead, you need to connect to the console (e.g. using PuTTY) of a XenServer that is a member of the pool. Now we need to figure out the UUID of the update. To do so, enter the following command:

xe update-list name-label=HOTFIXNAME

You can find the name of the update in the XenCenter download window. For example, Hotfix 11 for XenServer 7.1 CU1 has the name XS71ECU1011. Copy the UUID and note whether something is required after the hotfix is installed (after-apply-guidance). This can be either a toolstack restart (restartXAPI) or a host reboot (restartHost).

The next step is to install the Update on the required hosts. This can be achieved with the following command:

xe update-apply host=HOSTNAME uuid=PATCH-UUID

Replace HOSTNAME with the name of the XenServer you would like to update and PATCH-UUID with the UUID you copied. Repeat this for all hosts you would like to update. When the patch has been applied, no further message is displayed.

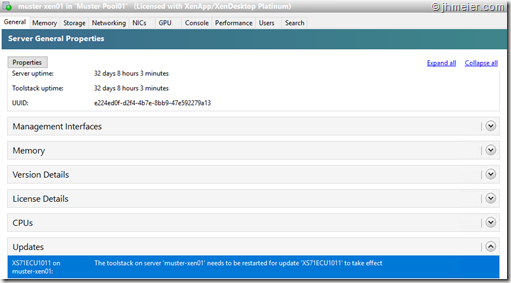

That means you have to remember the after-apply-guidance which was shown with the update UUID. The good thing is that if you have forgotten it, you can check in XenCenter whether another step is necessary. Just open the General area of the server. Under Updates you can see whether an installed update requires a toolstack or host restart. If a toolstack or host restart is necessary, perform it to finish installing the update.

That is it. Now you know how to install an update on a single member of a XenServer pool. There are just two other things I would like to add.

The first is that you can install multiple updates at the same time. You start with the same steps: select an update in XenCenter and continue until it has been transferred to the pool. Now go back with “Previous” to the Select Update area. Select the next update and continue as before until it has been transferred to the XenServer pool. Repeat this for all updates you would like to install. Remember not to close the update dialog, otherwise the update files are removed from the pool and you can no longer install them. Now note down the UUIDs of the updates and install all of them. It is not necessary to reboot the host or restart the toolstack after each update that requires it. Just install all of them, and if one (or more) of the updates requires a reboot, reboot once at the end.
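When you install several updates on several hosts, it helps to write down the commands and the one remaining step at the end. The following Python sketch builds the xe update-apply calls shown above and reduces the noted after-apply-guidance values to a single action. The function names are my own, and the sketch only knows the two guidance values mentioned in this post.

```python
def apply_commands(hosts, update_uuids):
    """Build one `xe update-apply` call per host and update."""
    return [f"xe update-apply host={host} uuid={uuid}"
            for host in hosts for uuid in update_uuids]


def final_action(guidances):
    """Collapse the after-apply-guidance of all installed updates into one step."""
    if "restartHost" in guidances:
        return "restartHost"   # one reboot at the end covers everything
    if "restartXAPI" in guidances:
        return "restartXAPI"   # only a toolstack restart is needed
    return None                # nothing left to do
```

For example, final_action(["restartXAPI", "restartHost"]) gives restartHost, which matches the rule above: install everything first, then reboot once.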

The next thing I would like to add is that you can also keep the files on the XenServer. To do so, you need to kill XenCenter through the Task Manager once the updates have been transferred to the pool.

Today I would like to show you a current issue when upgrading your Linux Receiver from 13.5 to 13.7 or 13.8. This is especially important for you when you are using Thin Clients. With a new firmware, a Thin Client vendor often also updates the Receiver version used. For example, with one of the last firmwares IGEL switched the default Receiver to version 13.7. Unfortunately, it seems that there was a bigger change in the Linux Receiver which leads to a performance reduction, especially when playing a video. This is a problem because it means that all HDX 3D Pro users are affected (HDX 3D Pro = H.264 stream). To show you the differences between both Receiver versions, I created the following video. Both screens are using the same hardware / firmware. Only the Linux Receiver version was changed.

We currently have a ticket open with Citrix to get the problem fixed, but there is no solution available so far.